Chain-of-Thought Is Rewriting Citation Order — And Your Brand Is Probably Last in Line

Three hidden intersections between CoT reasoning chains, context window economics, and RAG double-filtering that most GEO practitioners are ignoring

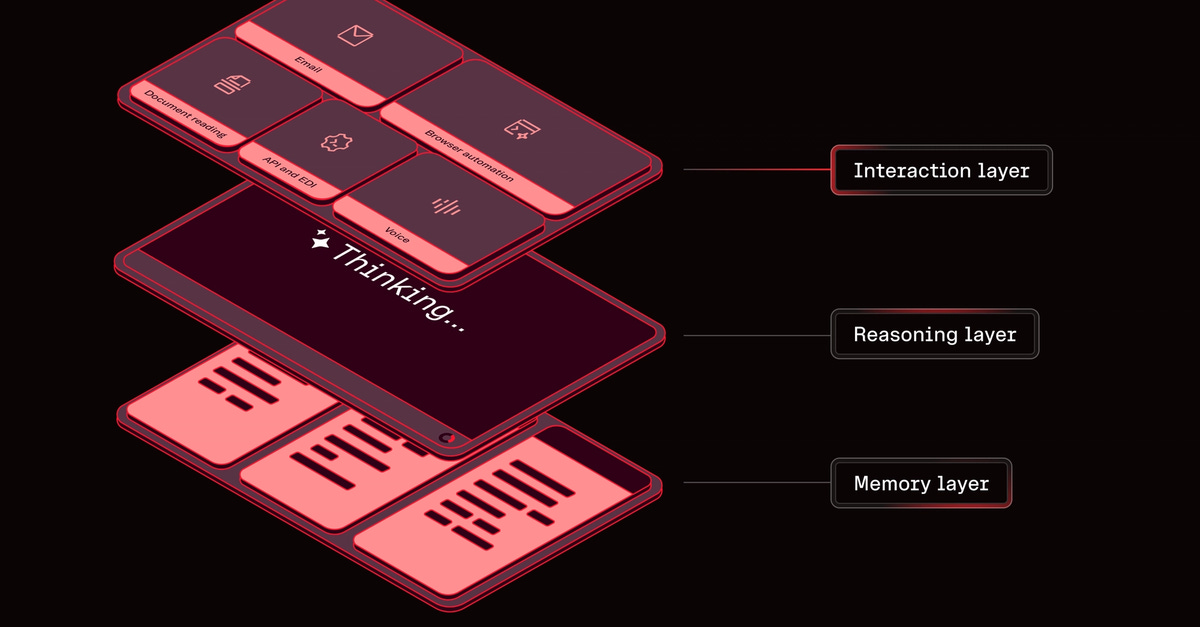

When a user asks an LLM a complex question, the model doesn't just retrieve — it reasons. Chain-of-thought (CoT) prompting changes not just how LLMs answer, but who gets cited during the reasoning steps. Most GEO practitioners are optimizing for retrieval. They're ignoring the reasoning layer entirely. That's a mistake.

CoT-enabled models, which now include nearly every frontier model in thinking mode, construct answers through sequential reasoning steps. Each step is a citation opportunity, and the brands that show up are the ones whose content is structured as reasoning-compatible evidence, not just keyword-dense paragraphs.

Here are three hidden connections between CoT, context window economics, and RAG that aren't commonly discussed — and how to fix each one.

CoT Creates a Reasoning Layer That Bypasses Your Keywords

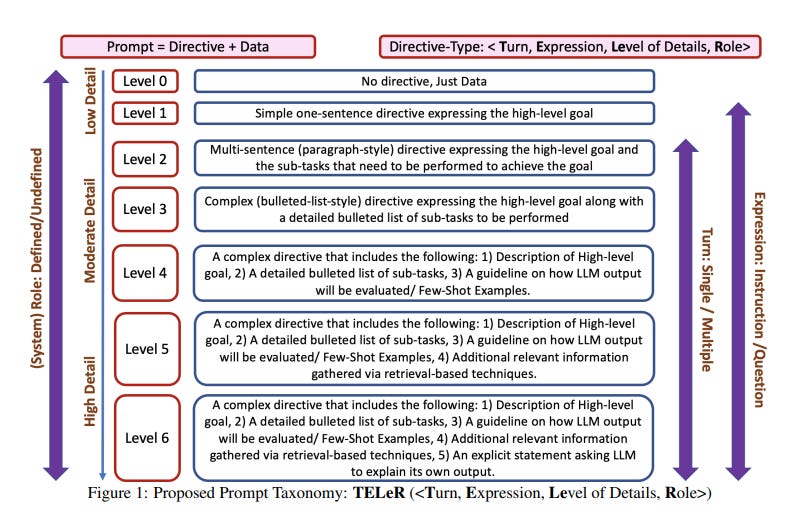

Traditional SEO rewards keyword matching. RAG-based retrieval rewards semantic similarity. But CoT adds a third layer: logical fit. During chain-of-thought reasoning, the model builds an argument — selecting content that advances a logical sequence, not just content that matches the query.

Your brand can have perfect semantic retrieval scores and still get filtered out in the reasoning step, because your content is structured as a conclusion, not as a step in an argument. "LLM Search Console tracks AI brand visibility" is a fact. "To understand why your brand isn't appearing in LLM responses, first measure where it does appear — then trace the retrieval path to find the gap" is a reasoning step. CoT-driven models prefer the latter.

This is the hidden connection most GEO guides skip: retrieval ranking and reasoning ranking are different optimization targets. You can dominate one and fail the other.

Fix: Restructure key content as sequential reasoning. Use "because," "therefore," and "which means" as structural connectors — not just for readability, but because they map directly to CoT reasoning patterns. Every content block should advance an argument, not just state a feature.
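One rough way to check this is to count reasoning connectors per content block. The sketch below is a heuristic only: the `connector_density` function and the `CONNECTORS` list are assumptions made for illustration, and a high score does not guarantee that a model will treat the block as a reasoning step.

```python
import re

# Connectors named in this article; extend the tuple for your own style guide.
CONNECTORS = ("because", "therefore", "which means", "this implies")


def connector_density(block: str) -> float:
    """Return reasoning connectors per 100 words in a content block."""
    words = re.findall(r"[A-Za-z']+", block)
    if not words:
        return 0.0
    text = block.lower()
    # Count whole-phrase matches for each connector.
    hits = sum(
        len(re.findall(r"\b" + re.escape(connector) + r"\b", text))
        for connector in CONNECTORS
    )
    return 100.0 * hits / len(words)


fact = "LLM Search Console tracks AI brand visibility."
step = (
    "Your brand is retrieved but not cited because the page states a "
    "conclusion, which means the model has no step to reuse."
)

print(connector_density(fact))  # no connectors in a bare feature statement
print(connector_density(step))  # connectors present in the reasoning step
```

Blocks that score zero are candidates for rewriting as a step in an argument rather than a standalone fact.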

The Context Window Budget Problem Nobody Measures

Extended CoT reasoning is token-hungry. Models like Claude or o3 in thinking mode can consume thousands of tokens before generating the final answer, and those tokens come out of the same context budget as your RAG-retrieved chunks.

Here's the squeeze: when a model runs a long reasoning chain, the chain competes with retrieved content for context space. Shorter, denser brand mentions survive context compression better than verbose product descriptions. If your GEO content is long and narrative, it may get truncated exactly when the model needs room to think.
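The arithmetic behind the squeeze can be sketched as follows. This is a simplified model under stated assumptions: a single shared token budget, chunks admitted in ranked order, and made-up numbers that are not measurements of any real model. The function names `retrieval_budget` and `chunks_that_fit` are hypothetical.

```python
def retrieval_budget(context_window: int, prompt_tokens: int,
                     reasoning_tokens: int, answer_tokens: int) -> int:
    """Tokens left for retrieved chunks after everything else is reserved."""
    remaining = context_window - prompt_tokens - reasoning_tokens - answer_tokens
    return max(remaining, 0)


def chunks_that_fit(chunk_sizes: list, budget: int) -> int:
    """Count how many chunks fit, in ranked order, before the budget runs out."""
    used = 0
    count = 0
    for size in chunk_sizes:
        if used + size > budget:
            break
        used += size
        count += 1
    return count


# Illustrative numbers only.
window = 32_000
chunks = [1_200, 900, 2_500, 400, 1_800]  # ranked retrieved chunks, in tokens

standard = retrieval_budget(window, prompt_tokens=1_000,
                            reasoning_tokens=0, answer_tokens=1_000)
thinking = retrieval_budget(window, prompt_tokens=1_000,
                            reasoning_tokens=26_000, answer_tokens=1_000)

print(standard, chunks_that_fit(chunks, standard))
print(thinking, chunks_that_fit(chunks, thinking))
```

With these assumed numbers, all five chunks fit when no reasoning tokens are reserved, but only the first two fit once the reasoning chain takes 26,000 tokens. A long third chunk is the first to be cut.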

There's a second effect nobody talks about: citation drift. As the reasoning chain evolves across steps, the model may start with your brand in context but, by step 6 or 7, pull in competitor content that connects more logically to the argument. You were retrieved. You were not cited. That's zero-credit visibility, and almost no tooling tracks it today.

Fix: Monitor not just whether your brand is retrieved, but whether it survives the full reasoning chain. LLM Search Console tracks citation patterns across thinking-mode vs. standard-mode queries, letting you see exactly where in the reasoning sequence your brand drops out — without manually testing dozens of model configurations.

RAG + CoT = A Double Filter, and You're Optimizing for Only Half of It

Most GEO practitioners ask one question: "Does my content get retrieved?" That's one filter. But in CoT-augmented pipelines, there's a second filter: "Is my content actually used in the reasoning chain?"

These two filters have very different optimization targets:

RAG retrieval rewards semantic relevance, recency, and source authority

CoT reasoning rewards logical structure, argument completeness, and claim specificity

You can pass RAG retrieval and fail CoT reasoning. The brands winning in CoT-heavy pipelines aren't necessarily the most authoritative — they're the ones whose content answers why and how, not just what. Technical explainers, structured case studies, and step-by-step comparisons perform disproportionately well in reasoning chains.

Fix: Audit your content for argument density, not just keyword density. Every key page should have a claim, evidence, and implication structure. That's the basic unit of CoT-compatible content — and it's rarely what marketing teams produce naturally.
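The claim, evidence, and implication structure can be treated as a simple checklist per content block. The sketch below uses a hypothetical `ContentBlock` schema and a `missing_parts` helper, both invented for illustration. Filling in the three fields is still an editorial judgment; the code only reports which ones are empty.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ContentBlock:
    """A page section split into the three parts named above (hypothetical schema)."""
    claim: str = ""
    evidence: str = ""
    implication: str = ""


def missing_parts(block: ContentBlock) -> List[str]:
    """List which of claim, evidence, and implication are empty."""
    return [
        name
        for name in ("claim", "evidence", "implication")
        if not getattr(block, name).strip()
    ]


feature_blurb = ContentBlock(claim="LLM Search Console tracks AI brand visibility.")
reasoning_block = ContentBlock(
    claim="Retrieval ranking and reasoning ranking are different optimization targets.",
    evidence="A page can be retrieved and still be dropped during the reasoning step.",
    implication="Audit both retrieval and citation, not retrieval alone.",
)

print(missing_parts(feature_blurb))
print(missing_parts(reasoning_block))
```

A typical feature blurb has only the claim filled in, which is the gap this audit is meant to surface.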

What Thinking Mode Does to Your Share of Voice

When models enter thinking mode, they self-correct more aggressively. That sounds like a feature. For brands, it's a risk: if your content appears in a retrieved chunk alongside an outdated claim or a factual inconsistency, the model's reasoning chain may flag it and actively deprioritize your brand in the final response.

This is where hallucination risk meets CoT self-correction. In standard mode, the model might simply repeat your brand mention. In thinking mode, it is more likely to check each claim against its internal priors. Brands with stale, contradictory, or over-claimed content therefore get penalized more in thinking mode than in standard mode, a gap almost nobody is measuring systematically.

The direct consequence: your Share of Voice in thinking-mode queries may be dramatically different from your SOV in standard-mode queries. If you're only running one query type in your monitoring stack, you have a structural blind spot in your AI visibility data.

Fix: Track SOV separately for thinking-mode and standard-mode responses. LLM Search Console provides cross-model citation tracking that makes this comparison possible without manual prompt testing — letting you isolate exactly which content is triggering reasoning-layer penalties.
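Splitting SOV by mode is mostly bookkeeping once responses are labeled. The sketch below assumes SOV means a brand's citations divided by all brand citations within a mode, which is one common definition and may differ from the one your tooling uses. The `share_of_voice` function, the mode labels, and the sample data are all invented for illustration.

```python
from collections import Counter, defaultdict


def share_of_voice(responses, brand):
    """Return {mode: brand citations / all brand citations} for each mode.

    `responses` is an iterable of (mode, cited_brands) pairs.
    """
    totals = defaultdict(Counter)
    for mode, cited_brands in responses:
        totals[mode].update(cited_brands)
    return {
        mode: counts[brand] / sum(counts.values())
        for mode, counts in totals.items()
        if sum(counts.values()) > 0  # skip modes with no citations at all
    }


# Invented sample data: (mode, brands cited in the final answer).
responses = [
    ("standard", ["Acme", "Globex"]),
    ("standard", ["Acme"]),
    ("thinking", ["Globex", "Initech"]),
    ("thinking", ["Acme", "Globex"]),
]

sov = share_of_voice(responses, "Acme")
print(sov)
```

In this made-up sample, the brand holds two of three citations in standard mode but only one of four in thinking mode, which is the kind of gap a single blended SOV number would hide.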

Quick Wins for GEO (CoT Edition)

Restructure landing pages with claim → evidence → implication flow

Add reasoning connectors: because, therefore, which means, this implies

Shorten brand description snippets to survive context window compression

Audit content for factual inconsistencies that trigger CoT self-correction penalties

Track thinking-mode vs. standard-mode citation rates as separate metrics

Use LLM Search Console to map citation drop-off across reasoning steps and model variants

The brands that will win in AI search aren't the ones with the best keywords or even the best RAG retrieval scores. They're the ones who understand that LLMs don't just retrieve — they reason. And reasoning has a different rulebook entirely.