Context Windows Are Your Real GEO Bottleneck

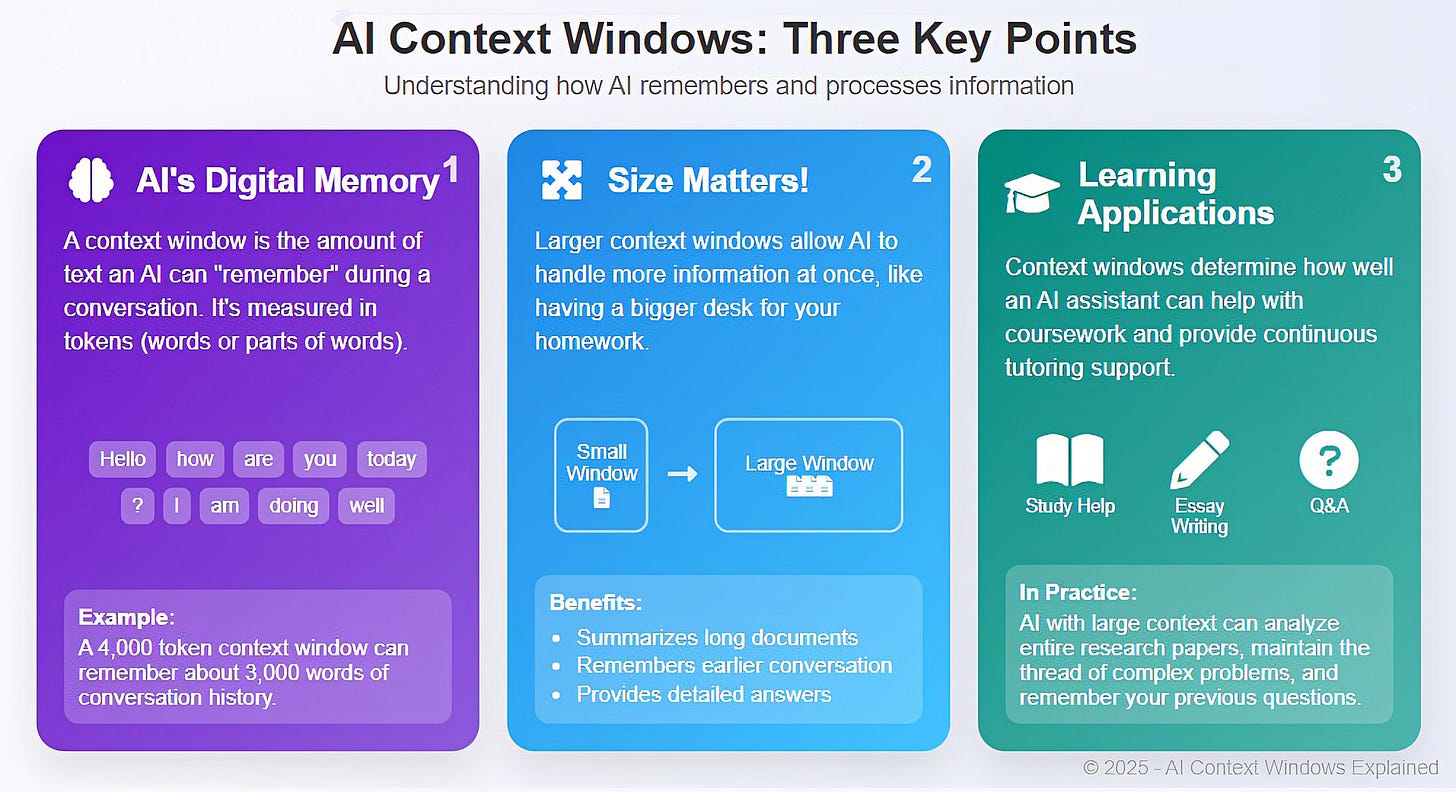

The obsession with context window size in LLM comparisons misses a critical GEO truth: what matters isn't how big your context window is, it's how much of it is left for actual reasoning once the overhead is paid.

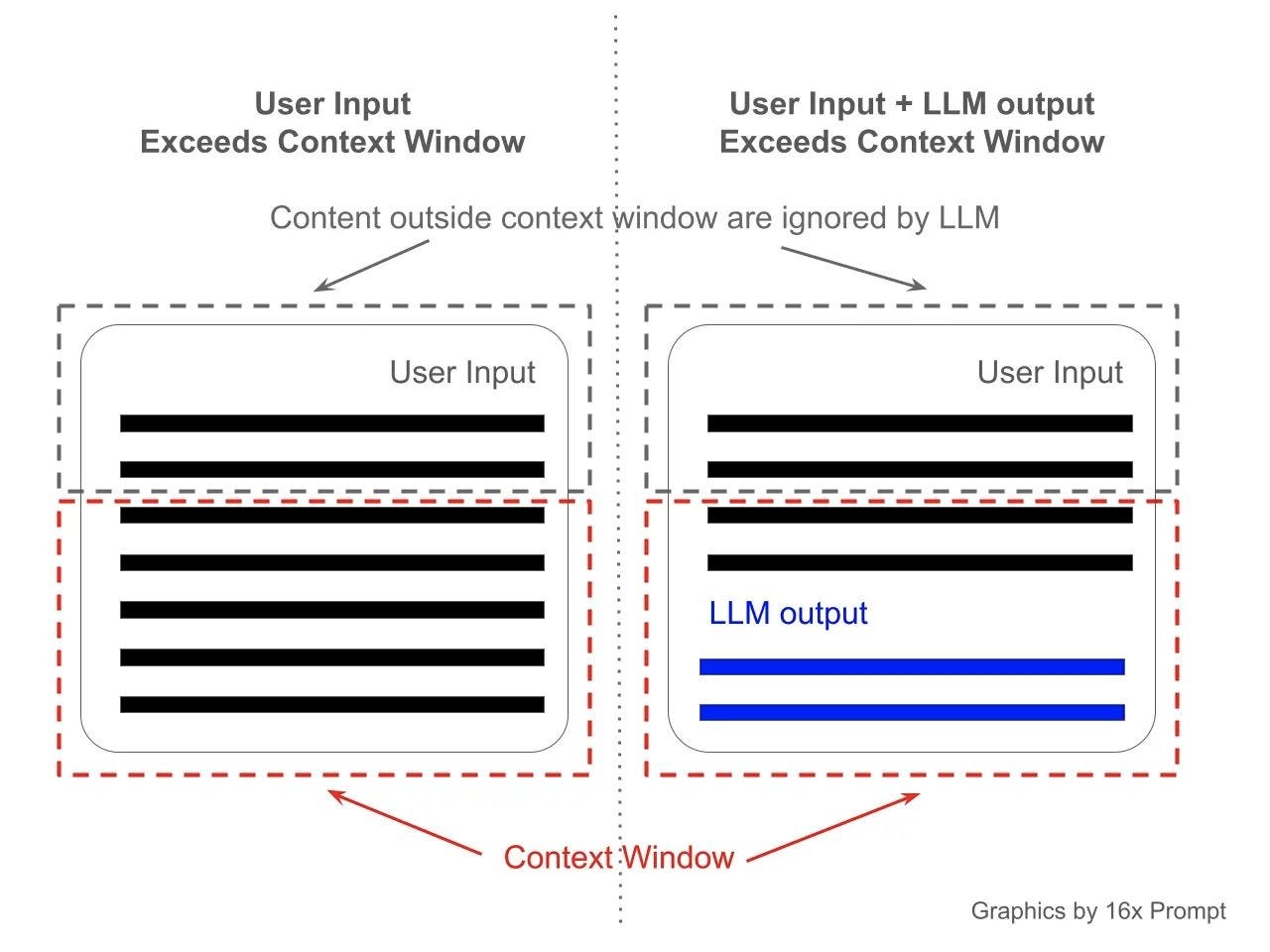

Every function definition, every RAG chunk, every system prompt trades away reasoning space that could go toward generating accurate answers in zero-click results. A competitor with a 4K context window and surgical token efficiency can outrank your 128K model bloated with unnecessary grounding.

The Context Tax Nobody's Tracking

Function Calling and Model Context Protocol (MCP) implementations eat into your effective context window. A weather function might cost you 200 tokens. Add five more integrations of similar size and roughly 1.2K tokens are gone before your model even sees the user query. Multi-Agent Orchestration makes this worse: each agent needs its own system prompt, knowledge graph fragments, and function definitions. You're burning context budget on infrastructure that doesn't directly improve answer quality.

GEO practitioners optimizing for inference traffic need to measure the "context tax": the gap between nominal context size and what's left for actual grounding and reasoning. In an extreme case, Claude Opus with 200K context running heavy MCP stacks might effectively have less reasoning space than a 32K model with lean function definitions.
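
The arithmetic behind the context tax can be sketched in a few lines of Python. This is a minimal illustration, not a measurement tool: the `ContextItem` type, the helper names, and every token count below are hypothetical, and real counts should come from your model's own tokenizer.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    """One fixed cost in the prompt (hypothetical structure)."""
    name: str
    tokens: int  # assumed to be measured with the target model's tokenizer


def context_tax(items):
    """Total tokens consumed before the user query is seen."""
    return sum(item.tokens for item in items)


def effective_space(nominal_window, items):
    """Tokens left over after the fixed overhead is paid."""
    return nominal_window - context_tax(items)


# Six integrations at roughly 200 tokens each (illustrative numbers only).
lean_stack = [ContextItem(f"function_{i}", 200) for i in range(6)]

# A deliberately heavy, hypothetical stack.
heavy_stack = [
    ContextItem("tool_schemas", 60_000),
    ContextItem("agent_system_prompts", 40_000),
    ContextItem("knowledge_graph_fragments", 75_000),
]

lean_left = effective_space(32_000, lean_stack)     # 32K model, lean definitions
heavy_left = effective_space(200_000, heavy_stack)  # 200K model, heavy stack

print(context_tax(lean_stack), lean_left, heavy_left)
```

With these made-up numbers, the 200K configuration ends up with less free space than the 32K one. The point is the comparison method, not the specific figures.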

Grounding vs. Hallucination—A False Trade

RAG-based GEO strategies assume more grounded context = lower hallucination rate. Not necessarily. If your knowledge graph takes up 60% of your context window, you've left your model only 40% to generate novel comparisons, synthesize data, and handle edge cases. That compression forces shortcuts. System 2 Thinking and Chain-of-Thought reasoning both require breathing room, because their intermediate reasoning tokens occupy the same window.

The hidden connection: token efficiency within a fixed context window determines whether your entire knowledge graph can fit before you hit the ceiling. If you can't fit your full product knowledge base, your brand accuracy drops in multi-turn conversations, which is exactly where LLM answer engines are winning Share of Voice from traditional search.
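
One way to reason about whether a knowledge base fits is to pack chunks into a fixed grounding budget by priority and see what gets left out. The sketch below assumes each chunk already has a token count and a relevance score. The `Chunk` type, the `pack_chunks` helper, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text_id: str
    tokens: int       # assumed pre-computed with the target tokenizer
    relevance: float  # assumed score from your retriever, higher is better


def pack_chunks(chunks, budget):
    """Greedily keep the most relevant chunks that fit within the token budget.

    Returns (kept, dropped). Greedy selection is a simple heuristic,
    not an optimal knapsack solution.
    """
    kept, dropped = [], []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.relevance, reverse=True):
        if used + chunk.tokens <= budget:
            kept.append(chunk)
            used += chunk.tokens
        else:
            dropped.append(chunk)
    return kept, dropped


chunks = [
    Chunk("pricing", 900, 0.92),
    Chunk("specs", 1500, 0.85),
    Chunk("faq", 1200, 0.60),
    Chunk("press", 800, 0.30),
]
kept, dropped = pack_chunks(chunks, budget=3000)
print([c.text_id for c in kept], [c.text_id for c in dropped])
```

Whatever lands in `dropped` is knowledge the model cannot draw on in that turn, which is one way to make the "ceiling" concrete.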

Share of Voice Lives in Token Efficiency, Not Model Size

Zero-Click Results favor models that synthesize multiple sources under tight token budgets. A model with a 200K context window but wasteful prompting will lose to a smaller competitor that packs meaning into fewer tokens. Fine-Tuning, including LoRA adapters, can move recurring instructions and domain knowledge out of the prompt and into the weights, which may do more for GEO outcomes than raw parameter count.

This is where Quantization becomes a quiet advantage. A quantized model running on cheaper infrastructure with stricter token budgets forces discipline in what gets encoded. Developers building AEO strategies should be measuring inference latency and tokens-per-second throughput, not just context window size.
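
A rough way to capture latency and tokens-per-second for a single request is to time the call yourself. In this sketch, `generate` is a hypothetical callable that returns a list of output tokens, and `fake_generate` is a stand-in used only so the example runs; replace both with your own client code.

```python
import time


def measure_generation(generate, prompt):
    """Time one call to `generate` and derive tokens per second.

    `generate` is a hypothetical callable returning a list of output tokens.
    """
    start = time.perf_counter()
    tokens = generate(prompt)
    elapsed = time.perf_counter() - start
    tokens_per_second = len(tokens) / elapsed if elapsed > 0 else float("inf")
    return {
        "latency_s": elapsed,
        "output_tokens": len(tokens),
        "tokens_per_second": tokens_per_second,
    }


def fake_generate(prompt):
    """Stand-in model: echoes the prompt's words after a short delay."""
    time.sleep(0.01)
    return prompt.split()


stats = measure_generation(fake_generate, "which plan includes priority support")
print(stats)
```

A single timing is noisy, so in practice you would likely repeat the call and compare medians across the quantized and full-precision variants.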

The Unspoken War: Perplexity as a GEO Metric

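Perplexity measures how well a model predicts each next token of a text: it is the exponential of the average negative log-likelihood per token. Lower perplexity on answers drawn from your knowledge base suggests the model is better aligned with that content, though it is not a direct measure of factual accuracy. Higher perplexity within your context window might mean your model is struggling with the compression tax. This isn't tracked in most GEO audits.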
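
For reference, perplexity can be computed from per-token log-probabilities as the exponential of their negated mean. The sketch below assumes you can already obtain natural-log probabilities for each token of a reference answer; how to get them depends on your model API, and the sample values are illustrative only.

```python
import math


def perplexity(token_logprobs):
    """Perplexity = exp of the mean negative log-likelihood per token.

    `token_logprobs` are natural-log probabilities the model assigned
    to each actual token of the text being scored.
    """
    if not token_logprobs:
        raise ValueError("need at least one token log-probability")
    mean_nll = -sum(token_logprobs) / len(token_logprobs)
    return math.exp(mean_nll)


# Illustrative values: every token predicted with probability 0.5 vs 0.1.
more_certain = [math.log(0.5)] * 4
less_certain = [math.log(0.1)] * 4
print(perplexity(more_certain), perplexity(less_certain))
```

Comparing this number for the same reference answers with and without added context is one way to see whether extra grounding helps or hurts prediction.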

Answer engines optimize for both accuracy and latency. A model that spends test-time compute (thinking harder before responding) might win on accuracy but lose on speed. The GEO winners are finding the sweet spot: just enough reasoning depth to crush hallucination rate without blowing token budgets.

Quick GEO Wins

1. Audit your "context tax": measure tokens burned on function definitions, system prompts, and RAG chunks. As a starting target, try 40% actual grounding and 60% reasoning space, then adjust based on measured accuracy.

2. Run perplexity tests against your knowledge graph. If perplexity jumps when you add RAG context, your compression tax is too high.

3. A/B test quantized vs. full-precision models for identical GEO tasks. Measure latency + accuracy tradeoffs.

4. Switch your north star metric from "maximize context window" to "minimize tokens-per-accurate-answer".
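
The metric in step 4 can be sketched as total tokens spent divided by the number of answers judged accurate. This is one possible definition, not an established standard: the function name, the `(tokens_used, is_accurate)` input shape, and the sample runs are all assumptions, and how accuracy is judged is left to your own evaluation process.

```python
def tokens_per_accurate_answer(results):
    """Total tokens spent divided by the number of answers judged accurate.

    `results` is a list of (tokens_used, is_accurate) pairs.
    """
    total_tokens = sum(tokens for tokens, _ in results)
    accurate = sum(1 for _, ok in results if ok)
    if accurate == 0:
        return float("inf")  # no accurate answers: cost is unbounded
    return total_tokens / accurate


# Hypothetical A/B runs over the same four queries.
bloated_prompt = [(9000, True), (9500, True), (8800, False), (9200, True)]
lean_prompt = [(2100, True), (2300, True), (2000, True), (2200, False)]

print(tokens_per_accurate_answer(bloated_prompt))
print(tokens_per_accurate_answer(lean_prompt))
```

Counting the tokens of failed answers in the numerator is a deliberate choice here: wasted attempts still cost budget.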