Grounding Is Quietly Deleting Your Brand From AI Responses

Three hidden ways better grounding mechanics degrade unverified brand visibility — and a data-driven fix using LLM Search Console

Your brand is probably getting mentioned by ChatGPT right now. Some of those mentions are hallucinated. As models ship better grounding pipelines, hallucinated mentions disappear. If you're not tracking which type of mention you have, you're celebrating a visibility score that's about to collapse.

What Grounding Actually Does to AI Responses

Most engineers know grounding as "RAG but for production." The reality is more nuanced. Modern LLMs use grounding to anchor generated text to verifiable external sources (web results, knowledge bases, tool outputs) before committing to a response. When grounding is active, the system retrieves candidate passages, re-ranks them, and conditions the model's generation on the top results.
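To make the retrieve, re-rank, and condition loop described above concrete, here is a deliberately tiny sketch. It assumes a toy setup in which token overlap stands in for a real retriever and Jaccard similarity stands in for a real re-ranker. The `Passage` type, the function names, the stopword list, and the prompt wording are all hypothetical. ChatGPT, Gemini, and Perplexity do not publish their pipelines in this form.

```python
import re
from dataclasses import dataclass

# Tiny illustrative stopword list so filler words don't count as matches.
STOPWORDS = {"a", "an", "the", "for", "is", "of", "to", "and", "in", "what", "s"}


@dataclass
class Passage:
    url: str
    text: str


def tokens(text: str) -> set[str]:
    # Lowercased word tokens: a toy stand-in for embeddings or BM25.
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(query: str, corpus: list[Passage], k: int = 10) -> list[Passage]:
    # Stage 1 (recall): keep passages sharing any token with the query,
    # ordered by raw overlap count.
    q = tokens(query)
    scored = [(len(q & tokens(p.text)), p) for p in corpus]
    hits = sorted((sp for sp in scored if sp[0] > 0), key=lambda sp: sp[0], reverse=True)
    return [p for _, p in hits[:k]]


def rerank(query: str, candidates: list[Passage], k: int = 3) -> list[Passage]:
    # Stage 2 (precision): rescore with Jaccard similarity, which penalises
    # long passages that match the query only incidentally.
    q = tokens(query)

    def jaccard(p: Passage) -> float:
        t = tokens(p.text)
        return len(q & t) / len(q | t)

    return sorted(candidates, key=jaccard, reverse=True)[:k]


def grounded_prompt(query: str, corpus: list[Passage]) -> str:
    # Stage 3 (conditioning): number the surviving passages and instruct the
    # model to answer only from them, citing each claim as [n].
    top = rerank(query, retrieve(query, corpus))
    sources = "\n".join(f"[{i}] {p.url}\n{p.text}" for i, p in enumerate(top, 1))
    return (
        f"Sources:\n{sources}\n\n"
        "Answer using only the sources above and cite them as [n].\n"
        f"Question: {query}"
    )
```

Consider a corpus holding one CRM documentation page and one unrelated recipe. Running `grounded_prompt("best CRM for startups", corpus)` on it keeps only the CRM page. The brand-visibility takeaway is that a brand absent from the retrievable corpus never reaches the model's context at all.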

The implication for brand visibility: grounded mentions and hallucinated mentions look identical in a raw AI response. ChatGPT might recommend your SaaS in exactly the same words whether it retrieved your documentation or confabulated the recommendation from patterns in its training data. The difference only surfaces when you examine citations.

This is why citation tracking isn't optional. From the outside, it's the only signal that distinguishes a grounded mention from a possibly hallucinated one. No citations attached to a brand mention? That's a flag, not a win.
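As a starting point, here is a minimal sketch of flagging a single response. It assumes you have captured the answer text and any source URLs the interface attached. The `AIResponse` record, the label names, and the owned-versus-third-party split are hypothetical choices for illustration. The check confirms only that sources are attached, not that they actually support the mention.

```python
import re
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class AIResponse:
    # Hypothetical record of one tracked answer: the generated text plus
    # any source URLs the interface attached to it.
    text: str
    citations: list[str] = field(default_factory=list)


def _owned(host: str, domains: set[str]) -> bool:
    # True if host is one of your domains or a subdomain of one.
    return any(host == d or host.endswith("." + d) for d in domains)


def classify_mention(resp: AIResponse, brand: str, brand_domains: set[str]) -> str:
    """Return 'no_mention', 'uncited', 'cited_owned', or 'cited_third_party'."""
    # Whole-word, case-insensitive brand match ("Acme" but not "Acmeville").
    if not re.search(rf"\b{re.escape(brand)}\b", resp.text, re.IGNORECASE):
        return "no_mention"
    if not resp.citations:
        # Brand named with nothing attached: a flag, not a win.
        return "uncited"
    # Only checks that sources are attached, not that they support the claim.
    hosts = {urlparse(u).hostname or "" for u in resp.citations}
    if any(_owned(h, brand_domains) for h in hosts):
        return "cited_owned"
    return "cited_third_party"
```

The owned/third-party split is there because third-party sources such as reviews and roundups are often the citations you control least and need most.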

The Hidden SOV Decay Curve Nobody Is Measuring

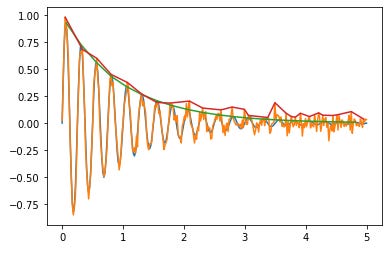

Here's the uncomfortable math. As grounding quality improves across model generations — GPT-4o → o3 → future architectures — models become progressively less tolerant of responses they can't source. Hallucinated recommendations get filtered. Confabulated brand attributes get corrected against retrieved evidence.

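If a significant portion of your current AI Share of Voice (SOV) is ungrounded, model updates are silently eroding it. You won't notice it in organic traffic metrics. You'll notice it six months later, when your AI mention rate has dropped 40% with no change in your content output. The size of that drop tracks your ungrounded share: if 40% of your mentions were ungrounded and updates filter them out, 40% of your mention rate goes with them.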
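The decay can be written down under two simplifying assumptions. First, grounded mentions survive every update. Second, each update keeps only a fraction r of the remaining ungrounded mentions. If u is the ungrounded share of your starting mention rate M_0, then after n updates M_n = M_0 × ((1 − u) + u × r^n). As n grows, this approaches M_0 × (1 − u). With u = 0.4, that is the 40% drop described above. The retention factor and function name below are hypothetical, for illustration only.

```python
def project_mention_rate(mention_rate: float, ungrounded_share: float,
                         retention: float, updates: int) -> list[float]:
    """Projected mention rate after 0..`updates` model updates.

    Hypothetical model, for illustration only:
    - grounded mentions survive every update unchanged;
    - each update keeps only `retention` of the remaining ungrounded mentions.
    """
    grounded = mention_rate * (1 - ungrounded_share)
    ungrounded = mention_rate * ungrounded_share
    return [grounded + ungrounded * retention ** n for n in range(updates + 1)]


# Example: 50% mention rate, 40% of it ungrounded, half of the ungrounded
# mentions filtered per update.
for n, rate in enumerate(project_mention_rate(0.50, 0.40, 0.5, 4)):
    print(f"after {n} updates: {rate:.3f}")
```

In this example the rate falls from 0.50 toward a floor of 0.30. The floor is set entirely by the grounded share, which is why the fix is more grounded mentions rather than more mentions.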

The brands winning long-term aren't just getting mentioned more — they're building citation infrastructure that survives grounding improvements: third-party reviews, structured documentation, industry roundups, and developer forum discussions. These are the retrieval anchors that make your brand grounding-stable across model generations.

The Selective Grounding Problem for Your Brand

Not every query triggers the grounding pipeline. Grounding adds latency (retrieval plus re-ranking costs tokens and wall-clock time), so models make selective decisions about which queries warrant it. High-stakes factual and commercial queries like "what's the best tool for X" tend to trigger grounding. Casual conversational queries frequently don't.

This creates an inference traffic asymmetry that almost no one is tracking: the queries that carry the most purchase intent are the ones most likely to expose your grounding vulnerabilities.

If you're measuring only raw mention rates, you're averaging grounded and ungrounded responses across all query types. That average hides the signal. You need prompt-level visibility data — which specific queries mention your brand, which don't, and crucially, which citations appear alongside those mentions. That's not something you can surface by manually querying ChatGPT once a week.
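The text notes that averaging across query types hides the signal. To see this, split tracked results by query intent before computing rates. The sketch below assumes a hypothetical `Observation` record per prompt run, with intent labels you assign yourself. Nothing here comes from a specific tool's export format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Observation:
    # Hypothetical tracking record: one prompt run against one model.
    prompt: str
    intent: str        # your own label, e.g. "commercial" or "conversational"
    mentioned: bool    # brand named in the answer
    cited: bool        # the mention came with at least one source URL


def rates_by_intent(obs: list[Observation]) -> dict[str, dict[str, float]]:
    """Mention rate and cited share of mentions, per intent and overall ('ALL')."""
    buckets: dict[str, list[Observation]] = defaultdict(list)
    for o in obs:
        buckets[o.intent].append(o)
        buckets["ALL"].append(o)

    out = {}
    for intent, rows in buckets.items():
        mentions = [o for o in rows if o.mentioned]
        out[intent] = {
            "mention_rate": len(mentions) / len(rows),
            # Share of mentions that carried a citation (0.0 if no mentions).
            "cited_share": sum(o.cited for o in mentions) / len(mentions) if mentions else 0.0,
        }
    return out
```

Take four conversational prompts that produce three uncited mentions and four commercial prompts that produce one cited mention. The aggregate mention rate is 50%. The commercial bucket, the one closest to a purchase, sits at 25%.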

How to Audit Your Brand's Grounding Health

A grounding audit has three components you can run today:

1. Citation Coverage Rate. What percentage of your AI mentions come with an actual source URL attached? A brand that appears in 80% of tracked responses but has a source attached to only 10% of those mentions has a fragile visibility profile. Grounding improvements will hit brands in this category disproportionately. Track this per model: Claude grounds differently than Gemini, and Perplexity's retrieval architecture is different again.

2. Source Diversity Score. Are the same 2–3 URLs driving all your citations, or are your citations spread across multiple domains? A single-source brand is one URL deprecation away from a visibility collapse. As a rule of thumb, healthy grounding profiles draw citations from 10+ distinct root domains.

3. Prompt-Level Grounding Variance. Run the same prompt across multiple models. If your brand appears in ChatGPT but not in Claude or Perplexity for the same commercial query, that's likely a grounding signal: one model's retrieval layer found you, and the others didn't. That gap is your GEO action item.
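Once you log each prompt run, the three audit metrics above reduce to a few lines of bookkeeping. This is a minimal sketch. It assumes a hypothetical `Run` record holding the model, the prompt, whether the brand was mentioned, and the URLs attached to that mention. It is not how LLM Search Console computes its numbers. The root-domain helper is deliberately naive and keeps only the last two host labels, so swap in a Public Suffix List library before trusting it on domains like `.co.uk`.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Run:
    # Hypothetical record of one prompt run on one model.
    model: str
    prompt: str
    mentioned: bool
    citations: list[str] = field(default_factory=list)  # URLs attached to the mention


def citation_coverage(runs: list[Run]) -> dict[str, float]:
    """Metric 1, per model: share of brand mentions with at least one URL."""
    mentions, cited = defaultdict(int), defaultdict(int)
    for r in runs:
        if r.mentioned:
            mentions[r.model] += 1
            cited[r.model] += bool(r.citations)
    return {m: cited[m] / mentions[m] for m in mentions}


def root_domain(url: str) -> str:
    # Naive: keeps the last two host labels. Wrong for suffixes like .co.uk.
    host = urlparse(url).hostname or ""
    return ".".join(host.split(".")[-2:])


def source_diversity(runs: list[Run]) -> int:
    """Metric 2: number of distinct root domains across all citations."""
    return len({root_domain(u) for r in runs if r.mentioned for u in r.citations})


def grounding_gaps(runs: list[Run]) -> dict[str, set[str]]:
    """Metric 3: per prompt, the models that did NOT mention the brand,
    kept only where at least one other model did."""
    seen, hit = defaultdict(set), defaultdict(set)
    for r in runs:
        seen[r.prompt].add(r.model)
        if r.mentioned:
            hit[r.prompt].add(r.model)
    return {p: seen[p] - hit[p] for p in seen if hit[p] and seen[p] - hit[p]}
```

Compare `source_diversity(runs)` against the 10+ root-domain rule of thumb. Treat each entry in `grounding_gaps(runs)` as a prompt/model pair to investigate.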

LLM Search Console runs this analysis automatically. It tracks mentions across ChatGPT, Claude, Gemini, and Perplexity simultaneously, logs every citation URL and snippet, and gives you prompt-level breakdowns so you can see exactly where your grounding holds and where it fails. Setup takes under five minutes.

Quick Wins for GEO

Audit citations before creating new content. Find out which existing URLs are being cited by AI models. Double down on those pages — structure, update, and amplify them before writing anything new.
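Finding which of your URLs models already cite is mostly a counting exercise over the citation lists you've collected. This sketch assumes you have exported the cited URLs for each tracked answer. The input shape and the names `top_cited_pages` and `normalise` are hypothetical. The code strips query strings and trailing slashes so `?utm_source=` variants don't split the count, and it counts each page at most once per answer.

```python
from collections import Counter
from urllib.parse import urlsplit, urlunsplit


def normalise(url: str) -> str:
    # Drop query strings, fragments, and trailing slashes so variants merge.
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme, parts.netloc.lower(), path, "", ""))


def is_owned(url: str, domain: str) -> bool:
    # True for your domain and its subdomains, but not look-alikes like "notacme.com".
    host = urlsplit(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def top_cited_pages(answers: list[list[str]], domain: str, n: int = 5) -> list[tuple[str, int]]:
    """Most-cited pages on your own domain across all tracked AI answers."""
    counts: Counter[str] = Counter()
    for urls in answers:
        # Count each page at most once per answer.
        counts.update({normalise(u) for u in urls if is_owned(u, domain)})
    return counts.most_common(n)
```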

Target structured query formats. Queries in "best X for Y" and "how to choose X" formats tend to trigger grounding more consistently than vague informational queries. Map your prompt tracking to these high-intent patterns first.
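A crude way to apply this is to bucket your tracked prompts with regular expressions and review the high-intent buckets first. The patterns and names below are hypothetical starting points, not a predictor of whether a model will actually ground. For example, `best ... for` will also match phrasings like "best way to ask for a raise", so check the buckets by hand.

```python
import re

# Hypothetical pattern list; extend it with the phrasings your buyers actually use.
HIGH_INTENT_PATTERNS = {
    "best_x_for_y": re.compile(r"\bbest\b.+\bfor\b", re.IGNORECASE),
    "how_to_choose": re.compile(r"\bhow (?:do i |to )choose\b", re.IGNORECASE),
}


def intent_bucket(prompt: str) -> str:
    """Name of the first matching high-intent pattern, else 'other'."""
    for name, pattern in HIGH_INTENT_PATTERNS.items():
        if pattern.search(prompt):
            return name
    return "other"


def prioritise(prompts: list[str]) -> list[str]:
    """High-intent prompts first; sorted() is stable, so order within groups is kept."""
    return sorted(prompts, key=lambda p: intent_bucket(p) == "other")
```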

Monitor per-model, not in aggregate. Grounding pipelines differ by model. A citation win in ChatGPT doesn't transfer automatically to Claude. Aggregate metrics mask the per-model reality.

Track weekly, not monthly. Grounding improvements ship with model updates, sometimes silently. Monthly visibility snapshots surface decay events weeks late and blur them into the month's average.
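A toy series shows the cost of monthly snapshots. The sketch below flags week-over-week relative drops above a threshold, then runs the same check on 4-week averages. The 15% threshold, the 4-week "month", and the series itself are illustrative assumptions, not measured data.

```python
def decay_alerts(rates: list[float], threshold: float = 0.15) -> list[int]:
    """Indices of periods whose rate fell by more than `threshold` (relative) vs the prior period."""
    alerts = []
    for i in range(1, len(rates)):
        prev, cur = rates[i - 1], rates[i]
        if prev > 0 and (prev - cur) / prev > threshold:
            alerts.append(i)
    return alerts


def monthly_means(weekly: list[float], weeks_per_month: int = 4) -> list[float]:
    """Average consecutive blocks of weeks (a crude stand-in for calendar months)."""
    chunks = (weekly[i:i + weeks_per_month] for i in range(0, len(weekly), weeks_per_month))
    return [sum(c) / len(c) for c in chunks]
```

Suppose the weekly mention rate runs 0.50, 0.50, 0.50, 0.30, 0.30, 0.30, 0.30, 0.30. The weekly check flags the drop in the week it happens (index 3). The monthly check flags it only once the second 4-week average exists. Even then, it reports a drop of about 33% instead of the real 40%.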

Treat zero-citation mentions as technical debt. Every uncited mention is a potential liability on your GEO balance sheet. Use citation data to identify and fix the coverage gaps before the next model update does it for you.