Grounding: The Silent Variable Destroying Your LLM Brand Visibility

Why your hallucination rate IS your brand risk score—and how to fix it

Most teams optimizing for LLM visibility are focused on the wrong layer. They're tweaking meta descriptions, adding FAQ schema, and chasing citations—while the actual mechanism that decides whether an LLM mentions your brand accurately (or at all) operates one level deeper: grounding.

Grounding is the process by which an LLM anchors its output to external, verifiable reference sources rather than relying solely on knowledge compressed into its trained weights. In RAG pipelines, it's the retrieval step. In tool-augmented agents, it's the function call. In plain generation, it's whatever is in the context window at inference time. And it turns out grounding has three non-obvious implications for brand visibility that almost nobody is talking about.

Hidden Connection #1: Being a Grounding Source = Owning the Zero-Click Result

Here's the uncomfortable truth about LLM-powered search: the brand that becomes the grounding source for a query doesn't just get cited—it becomes the answer. This is structurally different from traditional SEO's "position zero." When a model grounds its output in your documentation, your data, or your entity definition, you're not competing for a snippet. You're the substrate the model builds on.

Zero-click results in traditional search were annoying—you got visibility but no traffic. In LLM search, being the grounding source is the only position that matters. Every model that cites you is routing inference through your content. Every model that doesn't is routing around you.

The implication: your GEO (generative engine optimization) strategy shouldn't just aim for citation frequency. It should aim to make your structured content the canonical grounding layer for your category. That means publishing authoritative, linkable, machine-readable data, not just blog posts.
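To make "machine-readable data" concrete, here is a minimal sketch of one form it can take: a schema.org Organization description serialized as JSON-LD, a format this article names later as a grounding anchor. The helper name `build_organization_jsonld` and every value passed to it are placeholders for a made-up company, not a prescribed schema.

```python
import json


def build_organization_jsonld(name, url, founding_date, same_as):
    """Return a schema.org Organization description as a JSON-LD string."""
    entity = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "foundingDate": founding_date,  # ISO 8601 date
        "sameAs": list(same_as),  # other pages that identify the same entity
    }
    return json.dumps(entity, indent=2)


# Placeholder values for a hypothetical company; use verified facts in practice.
example_jsonld = build_organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    founding_date="2019-01-01",
    same_as=["https://www.example.org/profiles/example-co"],
)
print(example_jsonld)
```

On a web page, this output is typically embedded inside a `<script type="application/ld+json">` element so crawlers can read it without parsing the visible copy.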

Hidden Connection #2: Your Hallucination Rate Is Your Brand Risk Score

When developers talk about hallucination rates, they mean the percentage of model outputs that contain factually incorrect statements. But for brand teams, there's a more specific version of this problem: brand-specific hallucinations—cases where a model confidently states wrong facts about your company, product, or team.

This isn't a model quality issue. It's a grounding gap. If the models pulling your brand data have no reliable retrieval source for accurate entity facts, they fill the gap with plausible-sounding confabulations. Your founding year, your pricing, your feature set, your competitors—all vulnerable.

The inverse holds too: brands with well-grounded entity data in frequently retrieved sources tend to see fewer brand-specific hallucinations in LLM outputs. This is the real mechanism behind why structured data, authoritative citations, and entity consolidation matter. They're not just SEO signals; they're grounding anchors that reduce the probability of the model going off-script about your brand.
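If you want to put a number on this, a rough sketch of a brand-specific hallucination rate looks like the following. It assumes you have already collected model answers and extracted the stated value from each one (by hand or with a parser); the `BrandAnswer` record, the fact keys, and the sample data are all hypothetical, and exact string matching is a deliberate simplification.

```python
from dataclasses import dataclass


@dataclass
class BrandAnswer:
    model: str         # label for the model that produced the answer
    prompt: str        # the brand-specific prompt that was asked
    fact_key: str      # which canonical fact the prompt is about
    stated_value: str  # value extracted from the model's answer


def normalize(value):
    """Lowercase and collapse whitespace so trivial differences don't count as errors."""
    return " ".join(value.lower().split())


def brand_hallucination_rate(answers, canonical_facts):
    """Fraction of checkable answers whose stated value contradicts the canonical fact."""
    checkable = [a for a in answers if a.fact_key in canonical_facts]
    if not checkable:
        raise ValueError("no answers map to a known canonical fact")
    wrong = sum(
        1
        for a in checkable
        if normalize(a.stated_value) != normalize(canonical_facts[a.fact_key])
    )
    return wrong / len(checkable)


# Hypothetical ground truth and answers for a made-up company.
canonical_facts = {"founding_year": "2019", "headquarters": "Berlin"}
answers = [
    BrandAnswer("model-a", "When was Example Co founded?", "founding_year", "2019"),
    BrandAnswer("model-b", "When was Example Co founded?", "founding_year", "2017"),
    BrandAnswer("model-a", "Where is Example Co based?", "headquarters", "berlin"),
    BrandAnswer("model-b", "Where is Example Co based?", "headquarters", "Munich"),
]

rate = brand_hallucination_rate(answers, canonical_facts)
print(f"Brand hallucination rate: {rate:.0%}")  # prints "Brand hallucination rate: 50%"
```

Tracking the same rate per model and per fact key over time shows which facts lack a reliable grounding source.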

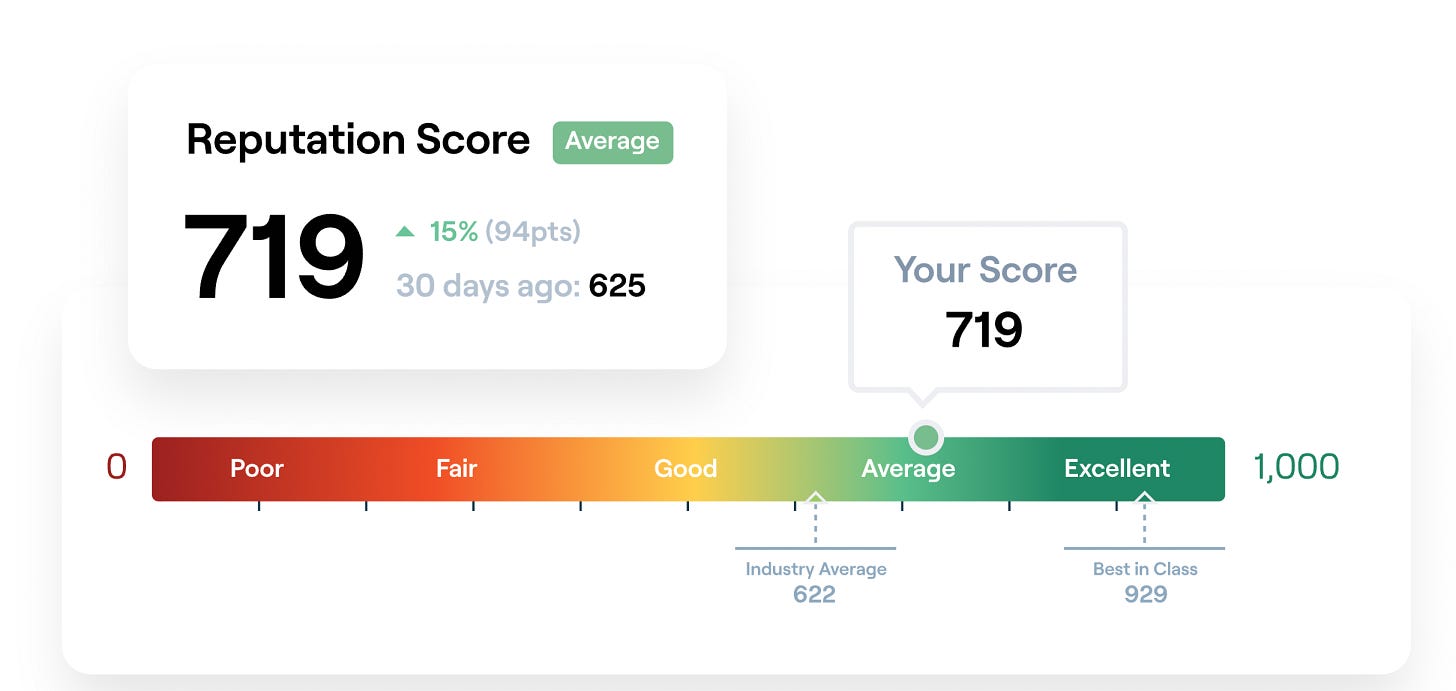

LLM Search Console tracks exactly this: what models say about your brand, how often it's accurate, and where the grounding gaps are. It's the closest thing the market has to a real-time hallucination audit for your brand's presence across LLMs.

Hidden Connection #3: The Inference Gap Is Your GEO Window

The inference gap is the delta between what a model knows from training and what it can retrieve at inference time. For a model such as GPT-4o used without retrieval, the gap is large for anything that happened after its knowledge cutoff. For a Perplexity-style system with live web retrieval, the gap collapses toward zero.

Most brands treat their LLM visibility as a training-data problem: a slow, expensive, indirect process of getting into future model versions. But the inference gap reveals a faster lever: retrieval-time content. If your brand has high-quality, structured, frequently retrieved content available at inference time, retrieval-enabled models can ground on it regardless of what's baked into their weights.

This is the real mechanism behind GEO. You're not trying to retrain models. You're trying to be the highest-quality source at retrieval time, so that when a model needs to ground an answer about your category, it pulls your content. The inference gap is your window. Systems like LLM Search Console let you measure whether you're actually making it through that window—or getting filtered out in favor of competitors.

GEO: Exploiting the Grounding Layer

Stop optimizing for impressions and start optimizing for grounding frequency. Here's what actually moves the needle:

Publish structured entity data. JSON-LD, llms.txt, and machine-readable product specs are grounding anchors. A clean entity definition beats 10 blog posts.

Audit your brand hallucination rate first. Use LLM Search Console to query ChatGPT, Perplexity, Gemini, and Claude with brand-specific prompts. Track what they get wrong. That's your baseline.

Consolidate authority signals. Citations from high-retrieval domains (Wikipedia, authoritative press, technical docs) increase your probability of being the grounding source. Fragmented, low-authority links don't accumulate retrieval weight.

Monitor inference traffic, not just mentions. Knowing your brand is mentioned isn't enough—you need to know which prompts trigger it, which models surface it, and whether the retrieved context is accurate. That's LLM Search Console's core workflow.

Close the inference gap with fresh, crawlable content. Models with retrieval capabilities prioritize recent, high-authority sources. Publishing outdated, uncrawlable PDFs or docs behind a login means the inference gap stays open, and competitors fill it.
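For the crawlability step above, one quick sanity check is whether your own robots.txt blocks the crawlers that feed retrieval systems. The sketch below uses Python's standard `urllib.robotparser` against an in-memory robots.txt. The user-agent names and the sample rules are illustrative assumptions; confirm the current crawler names in each vendor's documentation. It only covers robots.txt rules, not login walls or unparseable PDFs.

```python
from urllib.robotparser import RobotFileParser


def crawlable_by(robots_txt, url, user_agents):
    """Map each user agent to whether the given robots.txt lets it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in user_agents}


# Sample rules for a hypothetical site that blocks one crawler from its docs.
sample_robots_txt = """\
User-agent: GPTBot
Disallow: /docs/

User-agent: *
Allow: /
"""

report = crawlable_by(
    sample_robots_txt,
    "https://www.example.com/docs/pricing",
    ["GPTBot", "PerplexityBot"],
)
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

With these sample rules, the docs page is blocked for the first agent and allowed for the second, which is exactly the kind of accidental gap worth catching before auditing anything else.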

Grounding is the layer that connects training-time knowledge to inference-time output. Get it right and you're not just visible in LLMs—you're the source they build on. Get it wrong and you're invisible at best, hallucinated at worst.

The brands winning LLM visibility in 2026 aren't writing more content. They're engineering better grounding surfaces. Start with what models actually say about you: llmsearchconsole.com.