Model Context Protocol Is The Breakthrough That Agents Have Been Waiting For

Why standardized integrations will finally make AI agents operational in 2026

For three years, we've watched agents fail spectacularly at scale. They hallucinate API calls. They burn context parsing vendor-specific documentation instead of reasoning. They spend 40% of their token budget just explaining integration errors.

The problem was never the base model or the reasoning capability. It was integration hell.

Enter the Model Context Protocol (MCP), the open standard Anthropic introduced in November 2024 that finally solved it.

If you're building for GEO (generative engine optimization) or deploying agents in production, MCP is no longer optional. It's the foundation layer that separates viable agent systems from expensive toys.

What MCP Actually Does (Beyond the Hype)

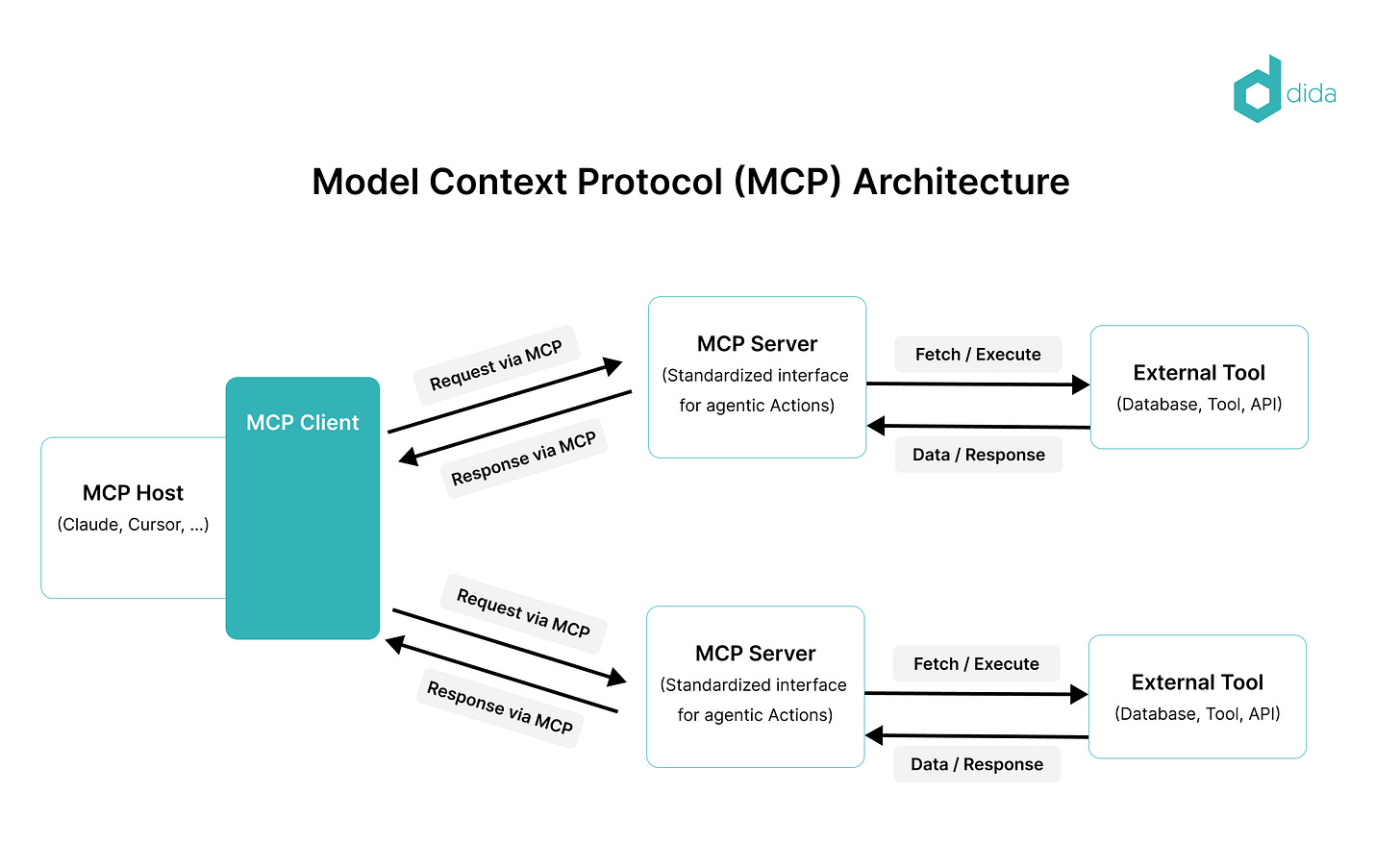

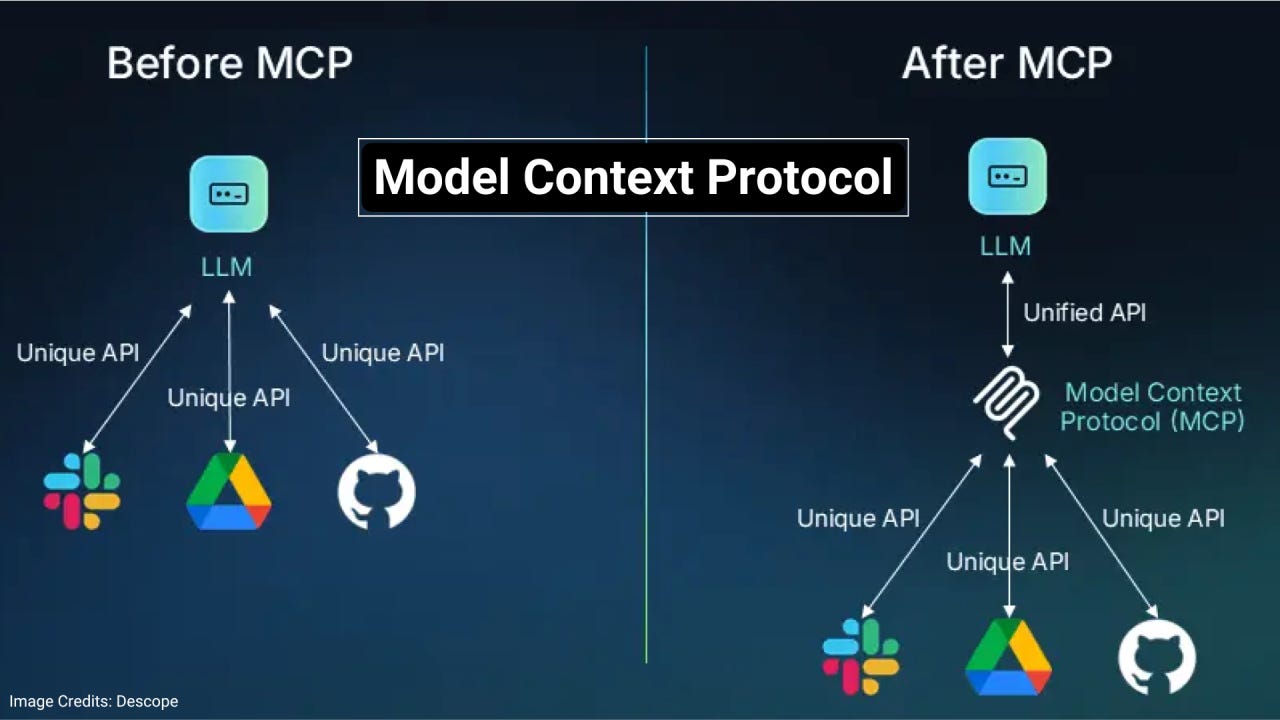

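MCP standardizes how AI agents connect to external resources: files, databases, APIs, vector stores, real-time feeds. Instead of each agent coding custom integrations (or worse, trying to use a tool blindly), MCP provides a contract-based client-server architecture. MCP servers expose tools, resources, and prompts through a common JSON-RPC 2.0 interface, and any MCP-capable client can discover and call them.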

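Translation: your agent calls a read_file tool that an MCP server advertises, passing arguments that match the tool's declared input schema, instead of parsing a vendor's API docs and guessing the right endpoint. There is no endpoint for the agent to hallucinate, and API version changes are absorbed by the server instead of breaking the integration.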

For teams running multiple agents, this reduces integration code by ~70%. For teams with compliance requirements, it moves integration logic out of the model's context window and into the infrastructure layer, a significant win for both safety and cost.
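To make the contract concrete, here is a minimal sketch of the server side of one exchange. It is an illustration under stated assumptions, not an SDK: MCP messages are JSON-RPC 2.0, and this sketch handles only the tools/list and tools/call methods, skipping the initialize handshake and the transport (stdio or HTTP). The read_file tool, its in-memory FILES store, and the helper names are hypothetical. A real server would normally be built on one of the official MCP SDKs.

```python
import json

# Hypothetical in-memory "filesystem" standing in for a real backend.
FILES = {"notes.txt": "MCP servers own the integration details."}


def read_file(arguments: dict) -> str:
    """Hypothetical tool handler: return the contents of a named file."""
    path = arguments["path"]
    if path not in FILES:
        raise FileNotFoundError(path)
    return FILES[path]


# Tool registry: name -> (description, JSON Schema for the arguments, handler).
TOOLS = {
    "read_file": (
        "Read a text file by path",
        {"type": "object",
         "properties": {"path": {"type": "string"}},
         "required": ["path"]},
        read_file,
    ),
}


def reply(req_id, result=None, error=None) -> str:
    """Serialize a JSON-RPC 2.0 response carrying either a result or an error."""
    msg = {"jsonrpc": "2.0", "id": req_id}
    if error is not None:
        msg["error"] = error
    else:
        msg["result"] = result
    return json.dumps(msg)


def handle(raw: str) -> str:
    """Dispatch one JSON-RPC request (tools/list or tools/call) and return the response."""
    req = json.loads(raw)
    req_id, method = req.get("id"), req.get("method")
    params = req.get("params", {})

    if method == "tools/list":
        tools = [{"name": name, "description": desc, "inputSchema": schema}
                 for name, (desc, schema, _) in TOOLS.items()]
        return reply(req_id, {"tools": tools})

    if method == "tools/call":
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            # Unknown tool is a protocol-level error (-32602: invalid params).
            return reply(req_id, error={"code": -32602, "message": "Unknown tool"})
        try:
            text = entry[2](params.get("arguments", {}))
            return reply(req_id, {"content": [{"type": "text", "text": text}],
                                  "isError": False})
        except Exception as exc:
            # Tool failures go back as a result flagged isError, so the model sees
            # one short, uniform message instead of raw vendor error output.
            return reply(req_id, {"content": [{"type": "text",
                                               "text": f"{type(exc).__name__}: {exc}"}],
                                  "isError": True})

    return reply(req_id, error={"code": -32601, "message": "Method not found"})


if __name__ == "__main__":
    call = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
            "params": {"name": "read_file", "arguments": {"path": "notes.txt"}}}
    print(handle(json.dumps(call)))
```

The design point is that vendor-specific details (paths, error types, any retries you add) live inside the handler, while the model only ever sees the uniform content/isError result shape.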

Hidden Connection #1: MCP + Agentic Workflows + GEO/AEO

Here's what nobody's talking about: MCP servers enable real-time answer engine monitoring without building a separate monitoring stack.

Agents can now query search engines through an MCP server, evaluate their own "share of voice" in AI answers, and adjust their reasoning strategy mid-task. Instead of batch GEO audits, you get live feedback loops.

Your agent runs a search query through an MCP tool, receives the SERP data, evaluates where your brand appears in the AI-generated answers, and optionally calls another tool to fetch your own content and inject it into its response. All within a single agent run.
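The share-of-voice check in that loop reduces to simple counting once the answers are in hand. A minimal sketch, assuming the AI-generated answer texts have already come back from a hypothetical search tool on an MCP server; the brand names and sample answers are made up, and case-insensitive substring matching stands in for proper entity matching.

```python
def share_of_voice(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Fraction of AI-generated answers that mention each brand (case-insensitive)."""
    if not answers:
        return {brand: 0.0 for brand in brands}
    lowered = [answer.lower() for answer in answers]
    return {
        brand: sum(brand.lower() in answer for answer in lowered) / len(answers)
        for brand in brands
    }


def answer_gaps(queries: list[str], answers: list[str], brand: str) -> list[str]:
    """Queries whose AI-generated answer never mentions the brand."""
    return [q for q, a in zip(queries, answers) if brand.lower() not in a.lower()]


if __name__ == "__main__":
    # Illustrative data: in practice these answers would come back from a search tool.
    queries = ["best crm for startups", "crm with ai features", "cheapest crm"]
    answers = [
        "Acme CRM and Globex are popular picks.",
        "Globex leads on AI features.",
        "Acme CRM has a generous free tier.",
    ]
    print(share_of_voice(answers, ["Acme", "Globex"]))
    print(answer_gaps(queries, answers, "Acme"))  # ['crm with ai features']
```

Each gap query is a candidate for the content-injection step: the agent can fetch your own page on that topic and cite it.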

This is operational GEO. Not aspirational.

Hidden Connection #2: MCP + Knowledge Graphs + Function Calling

Once MCP standardizes how tools are called, knowledge graphs become the natural "schema" for organizing those calls.

Instead of: "Here's a giant list of 47 available functions — figure out which ones to use," you get: "Here's an entity graph that shows relationships between tools, data sources, and outcomes. Follow the edges."

This supports multi-hop reasoning with far less room for hallucination. Your agent doesn't randomly guess which functions to chain together. It traverses a knowledge graph where each edge represents a valid reasoning path.

The result? Agents that actually understand when they're out of scope instead of confidently making things up.
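One way to read "follow the edges" is as a path search over a directed graph whose nodes are inputs, tools, and outcomes. The sketch below assumes that framing (it is not something MCP itself defines): TOOL_GRAPH and its node names are hypothetical, breadth-first search returns the shortest valid chain, and a None result is the agent's signal that a request is out of scope, not an invitation to improvise.

```python
from collections import deque
from typing import Optional

# Hypothetical tool graph: an edge A -> B means "A's output is a valid input to B".
TOOL_GRAPH = {
    "query": ["web_search", "kb_lookup"],
    "web_search": ["extract_citations"],
    "kb_lookup": ["fetch_page"],
    "fetch_page": ["extract_citations"],
    "extract_citations": ["grounding_report"],
}


def plan_chain(graph: dict[str, list[str]], start: str, goal: str) -> Optional[list[str]]:
    """Shortest valid tool chain from start to goal (BFS), or None if out of scope."""
    parents: dict[str, Optional[str]] = {start: None}
    frontier = deque([start])
    while frontier:
        node = frontier.popleft()
        if node == goal:
            # Walk parent links back to the start, then reverse into call order.
            path = []
            cur: Optional[str] = node
            while cur is not None:
                path.append(cur)
                cur = parents[cur]
            return path[::-1]
        for nxt in graph.get(node, []):
            if nxt not in parents:
                parents[nxt] = node
                frontier.append(nxt)
    return None  # No edge path exists: report out of scope instead of guessing.


if __name__ == "__main__":
    print(plan_chain(TOOL_GRAPH, "query", "grounding_report"))
    print(plan_chain(TOOL_GRAPH, "query", "send_email"))  # None
```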

Hidden Connection #3: MCP + Token Efficiency + RAG

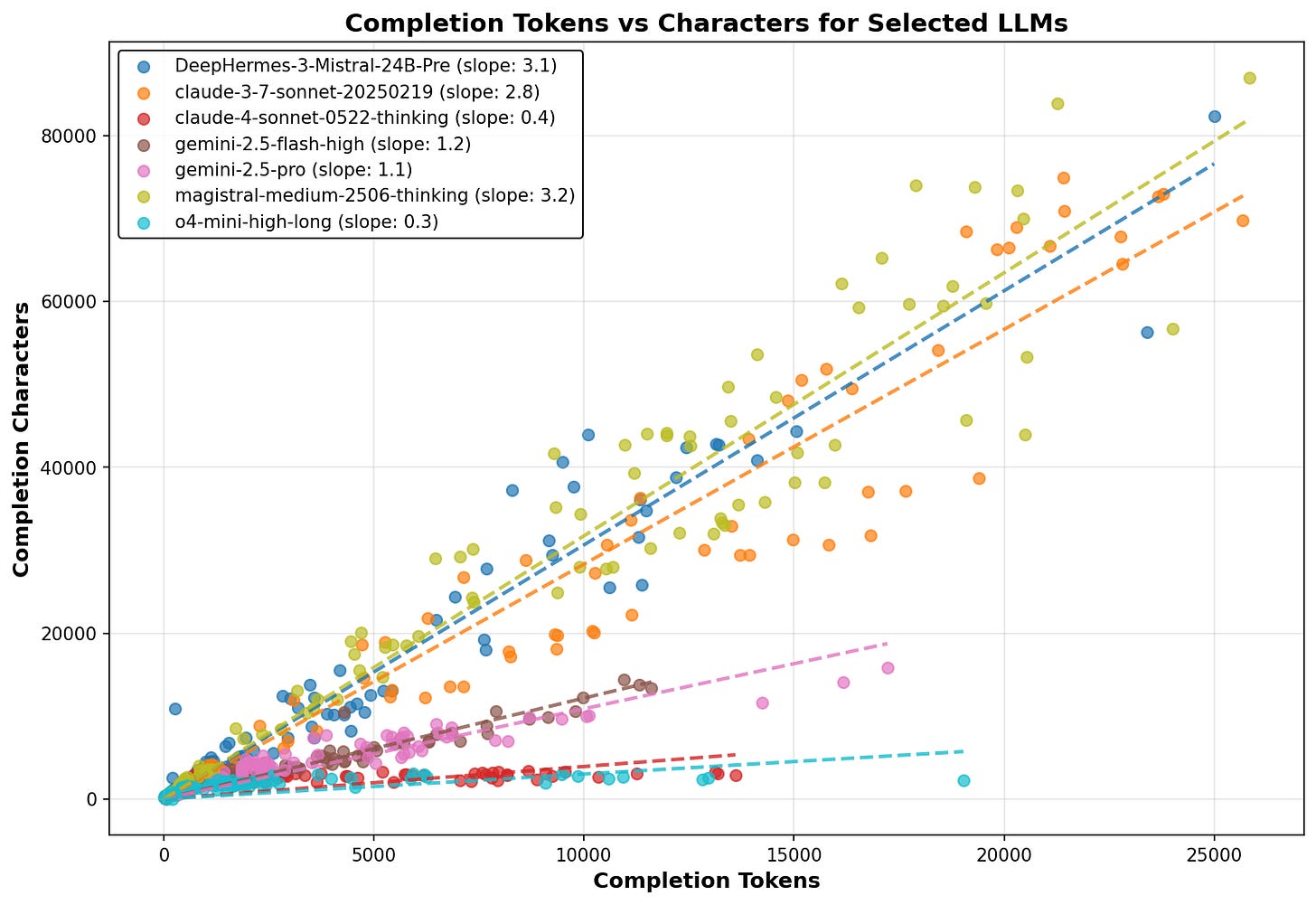

Here's the painful truth: RAG systems waste tokens on integration overhead.

Your agent receives a vector search result, but then spends tokens parsing API docs, handling error responses, retrying failed calls, and explaining rate limits to the model. The "actual work" (reasoning over the retrieved data) is maybe 40% of the token budget.

MCP collapses this overhead. Standardized, predictable tool calls. No doc parsing required. Retries and error normalization live in the server, not the prompt.

This makes hybrid RAG + vector search actually affordable at scale. You can run more retrieval steps within the same token budget, improving grounding without burning compute.
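The budget argument is easy to check with back-of-the-envelope numbers. The sketch below assumes each retrieval step needs a fixed number of "useful" tokens and that overhead inflates the total cost of the step; the figures in the example (a 100,000-token budget, 2,000 useful tokens per step) are illustrative, with the 40% useful share taken from the estimate above and 80% a hypothetical post-MCP value.

```python
def steps_within_budget(budget: int, useful_tokens_per_step: int, useful_pct: int) -> int:
    """How many retrieval steps fit in a token budget.

    useful_pct is the share (0-100] of each step's tokens spent on actual reasoning;
    the remainder is integration overhead (doc parsing, retries, error text).
    """
    if not 0 < useful_pct <= 100:
        raise ValueError("useful_pct must be in (0, 100]")
    # Total cost per step = useful tokens / useful share, rounded up (integer math).
    cost_per_step = -(-useful_tokens_per_step * 100 // useful_pct)
    return budget // cost_per_step


if __name__ == "__main__":
    print(steps_within_budget(100_000, 2_000, 40))  # 20 steps at 5,000 tokens each
    print(steps_within_budget(100_000, 2_000, 80))  # 40 steps at 2,500 tokens each
```

Under these assumptions, halving the overhead share doubles the number of retrieval steps a fixed budget can afford.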

Quick Wins for GEO Practitioners

1. Monitor AI Answer Gaps Programmatically: Build an MCP server that connects to search APIs. Your agent can now fetch answer engine results in real time and identify where your brand is missing.

2. Dynamic Content Injection: Use MCP servers to grant agents read access to your knowledge base and website. They can evaluate retrieval quality, flag hallucination risks, and inject verified content into answers.

3. Multi-Step GEO Audits: Chain multiple MCP tools — web search + knowledge graph + content store — and run complex queries that used to require manual work.

4. Cheaper Evals: Build an MCP server for your evaluation dataset. Run consistency checks, grounding audits, and citation accuracy tests without rewriting the agent each time.
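As one example of the checks in item 4, citation accuracy can be scored as the share of URLs an answer cites that actually appear among the sources the agent retrieved. This is a hedged sketch: the function names, the regex-based URL extraction, and the sample data are assumptions for illustration, and in practice the eval cases would be served by your own (hypothetical) evaluation-dataset MCP server.

```python
import re
from typing import Optional

# Rough URL matcher: stops at whitespace and closing parentheses or brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\]]+")


def citation_accuracy(answer: str, retrieved_urls: set[str]) -> Optional[float]:
    """Share of URLs cited in the answer that were actually retrieved.

    Returns None when the answer cites nothing, so uncited answers can be
    flagged separately instead of silently scoring as perfect.
    """
    cited = {url.rstrip(".,;:") for url in URL_PATTERN.findall(answer)}
    if not cited:
        return None
    return len(cited & retrieved_urls) / len(cited)


def run_eval(cases: list[tuple[str, set[str]]]) -> dict[str, float]:
    """Aggregate citation accuracy over (answer, retrieved_urls) eval cases."""
    scores = [citation_accuracy(answer, urls) for answer, urls in cases]
    scored = [s for s in scores if s is not None]
    return {
        "mean_accuracy": sum(scored) / len(scored) if scored else 0.0,
        "uncited_rate": scores.count(None) / len(scores) if scores else 0.0,
    }


if __name__ == "__main__":
    cases = [
        ("MCP uses JSON-RPC (https://example.com/spec). See https://example.com/blog.",
         {"https://example.com/spec"}),
        ("No sources here.", set()),
    ]
    print(run_eval(cases))
```

Because the scoring lives outside the agent, the same dataset can be rerun against each agent revision without touching its prompts.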

MCP isn't a feature drop. It's the infrastructure shift that moves agents from research projects to production systems.

Start by mapping your integration points. Build one MCP server. Then watch the cost-per-operation drop and the operational reliability climb.

The agents that dominate 2026 won't be the ones with the biggest base models. They'll be the ones with the best infrastructure.