Multi-Agent Orchestration Is Killing Your GEO Performance

You’ve optimized your context windows. You’ve implemented RAG. You’ve even tuned your chain-of-thought prompting to perfection. And yet, generative engines still aren’t ranking you. The culprit? Multi-agent orchestration patterns that look smart at the model level but are bleeding you dry at the GEO level.

When you orchestrate multiple agents in parallel, all competing for the same user intent, you multiply token consumption, and most teams dismiss that as “just the cost of scale.” In generative search, that is a mistake. Every query your agents process in parallel multiplies your inference footprint. A user asking “best LLM for reasoning” now spawns five agents simultaneously, each burning tokens to fetch data, evaluate sources, and generate partial answers. The generative engine sees this as thrashing. It sees inefficiency. It deprioritizes you.
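To make the footprint concrete, here is a back-of-the-envelope sketch. The `AgentCost` class, the `fan_out_tokens` helper, and every token count are illustrative assumptions, not measurements from a real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCost:
    """Hypothetical token usage of one agent handling one query."""
    fetch_tokens: int
    evaluate_tokens: int
    generate_tokens: int

    @property
    def total(self) -> int:
        return self.fetch_tokens + self.evaluate_tokens + self.generate_tokens


def fan_out_tokens(per_agent: AgentCost, agents: int, merge_tokens: int = 0) -> int:
    """Tokens spent on one query when `agents` run in parallel, plus a merge step."""
    if agents < 1:
        raise ValueError("agents must be at least 1")
    return per_agent.total * agents + merge_tokens


# Illustrative numbers only.
cost = AgentCost(fetch_tokens=1_500, evaluate_tokens=800, generate_tokens=700)
single = fan_out_tokens(cost, agents=1)
fanned = fan_out_tokens(cost, agents=5, merge_tokens=1_000)
print(single, fanned, round(fanned / single, 2))  # 3000 16000 5.33
```

Under these assumed numbers, five agents cost slightly more than five times a single agent, because merging the partial answers adds its own tokens.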

The hidden truth: engines like Perplexity weight not just answer quality, but answer efficiency. A single well-coordinated agent beats five chattering agents by a mile. Your multi-agent setup is visible to the engine. And it’s being dinged for it.

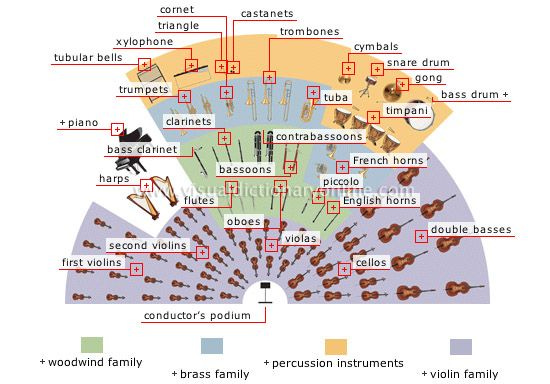

Share of Voice Cannibalization

Here’s where it gets worse. Each of your agents is independently optimized to surface the same queries. Agent A targets “best AI coding tools,” Agent B targets “AI code generation,” Agent C targets “LLM programming.” You think you’re covering more surface area. In reality, you’re fragmenting your share of voice.

Generative engines use disambiguation logic. They see overlap in intent. When Claude or ChatGPT parses your content, it collapses duplicate intents into a single ranking signal, and that signal gets diluted across your agents instead of concentrated in one powerful citation. You’re competing with yourself for the same zero-click result real estate.

The fix? Coordinate agent intent at the orchestration layer. Have your agents declare their specific intent domains upfront. Let the orchestrator route queries precisely, not distribute them like shotgun pellets.
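One way this could look in practice is sketched below. The `Agent` and `Orchestrator` classes and the keyword-matching rule are hypothetical; a real router would likely use a classifier or embeddings rather than word overlap.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Agent:
    name: str
    keywords: frozenset  # declared intent domain, as lowercase keywords (assumption)


class Orchestrator:
    """Routes each query to exactly one agent based on declared intent domains."""

    def __init__(self) -> None:
        self._owner_by_keyword: dict = {}

    def register(self, agent: Agent) -> None:
        # Reject overlapping declarations so two agents never claim the same intent.
        clashes = sorted(k for k in agent.keywords if k in self._owner_by_keyword)
        if clashes:
            raise ValueError(f"{agent.name} overlaps existing domains: {clashes}")
        for keyword in agent.keywords:
            self._owner_by_keyword[keyword] = agent

    def route(self, query: str) -> Optional[Agent]:
        # Count keyword hits per agent and return the single best match.
        hits: dict = {}
        for word in query.lower().split():
            owner = self._owner_by_keyword.get(word)
            if owner is not None:
                hits[owner] = hits.get(owner, 0) + 1
        if not hits:
            return None  # no agent owns this intent; do not fan out
        return max(hits, key=hits.get)


orchestrator = Orchestrator()
orchestrator.register(Agent("coding-tools", frozenset({"coding", "tools", "ide"})))
orchestrator.register(Agent("code-generation", frozenset({"generation", "autocomplete"})))

chosen = orchestrator.route("best AI coding tools")
print(chosen.name if chosen else "no agent")  # coding-tools
```

The design choice worth noting is that overlap is rejected at registration time, so duplicated intent surfaces as a configuration error instead of as several agents answering the same query.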

Ungrounded Agent Hallucinations at Scale

Most multi-agent systems have a fatal flaw: agents lack shared grounding context. Agent A pulls from your documentation. Agent B queries your database. Agent C calls your API. They never synchronize on what’s true.

When three agents answer the same question three different ways—even with identical source data—the engine flags inconsistency. And generative search engines hate inconsistency more than they hate being wrong. Inconsistency signals low confidence. It signals you don’t actually know your domain.
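A minimal sketch of shared grounding follows, assuming the facts can be reduced to key-value pairs. The `build_snapshot` and `grounded_answer` helpers and the sample facts are hypothetical.

```python
from types import MappingProxyType
from typing import Mapping, Optional


def build_snapshot(*sources: Mapping[str, str]) -> Mapping[str, str]:
    """Merge every source once and fail loudly if two sources disagree on a fact."""
    merged: dict = {}
    for source in sources:
        for key, value in source.items():
            if merged.get(key, value) != value:
                raise ValueError(
                    f"sources disagree on {key!r}: {merged[key]!r} vs {value!r}"
                )
            merged[key] = value
    return MappingProxyType(merged)  # read-only view shared by all agents


def grounded_answer(snapshot: Mapping[str, str], fact_key: str) -> Optional[str]:
    """Return the shared fact, or None so the agent abstains instead of guessing."""
    return snapshot.get(fact_key)


# Hypothetical facts from documentation, database, and API.
docs = {"max_context_tokens": "128000"}
database = {"plan_price_usd": "49"}
api = {"max_context_tokens": "128000", "api_version": "v2"}

snapshot = build_snapshot(docs, database, api)
answers = {
    name: grounded_answer(snapshot, "max_context_tokens") for name in ("A", "B", "C")
}
print(answers)
print(len(set(answers.values())) == 1)  # True: all three agents agree
```

Because every agent reads from the same read-only snapshot, a disagreement between sources is caught once, at build time, rather than showing up as three different published answers.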

Worse, without cross-agent grounding, hallucination rates compound. Agent A hallucinates a stat. Agent B cites something that doesn’t exist. The engine logs these as separate failures across your domain. Your hallucination rate—a key GEO metric—balloons.
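The compounding effect can be approximated with simple probability. The sketch below assumes each agent hallucinates independently at the same rate, which is a simplification, and the 5% rate is an illustrative figure rather than a measured one.

```python
def any_hallucination_probability(per_agent_rate: float, agents: int) -> float:
    """Chance that at least one of `agents` independent agents hallucinates."""
    if not 0.0 <= per_agent_rate <= 1.0:
        raise ValueError("per_agent_rate must be between 0 and 1")
    if agents < 0:
        raise ValueError("agents must be non-negative")
    # P(at least one failure) = 1 - P(no agent fails)
    return 1.0 - (1.0 - per_agent_rate) ** agents


for n in (1, 3, 5):
    print(n, round(any_hallucination_probability(0.05, n), 4))
# 1 0.05
# 3 0.1426
# 5 0.2262
```

Under these assumptions, a per-agent rate of 5% becomes a roughly 23% chance that at least one of five answers contains a hallucination.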

The Latency-Visibility Trap

Multi-agent orchestration introduces latency. You’re coordinating multiple parallel processes, handling timeouts, merging partial results. Even if you implement all of this in 200ms, …

Keep in Mind

Engines like Perplexity have explicit metrics for answer latency. Slower answers get deprioritized in citation order. More agents doesn’t mean faster answers; it means more moving parts, more failure modes, more latency. A single optimized agentic workflow beats orchestrated chaos every time.
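The coordination cost of parallel agents can be seen in a small simulation. This is a sketch using Python's standard `asyncio`; the `run_agent` stand-in, the agent names, and the delays are hypothetical, with sleeps in place of real model calls.

```python
import asyncio
import time


async def run_agent(name: str, delay_s: float) -> str:
    """Stand-in for a real agent call; sleeps to simulate work."""
    await asyncio.sleep(delay_s)
    return f"{name}: partial answer"


async def fan_out(delays_s: dict, timeout_s: float):
    """Run all agents in parallel, drop any that exceed the timeout, time the call."""
    started = time.perf_counter()

    async def guarded(name: str, delay: float):
        try:
            return name, await asyncio.wait_for(run_agent(name, delay), timeout_s)
        except asyncio.TimeoutError:
            return name, None  # agent was too slow; its partial result is lost

    outcomes = await asyncio.gather(*(guarded(n, d) for n, d in delays_s.items()))
    answers = [text for _, text in outcomes if text is not None]
    timed_out = [name for name, text in outcomes if text is None]
    return answers, timed_out, time.perf_counter() - started


answers, timed_out, elapsed = asyncio.run(
    fan_out({"A": 0.01, "B": 0.02, "C": 0.5}, timeout_s=0.1)
)
print(answers, timed_out, f"{elapsed:.2f}s")
```

In this simulation the overall response takes about as long as the timeout, because the slowest agent sets the pace even though two agents finished almost immediately.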