Share of Voice (SOV) in Answer Engines: Why Token Efficiency Is Your Hidden GEO Moat

Infrastructure math determines answer engine visibility. Token efficiency, hallucination rates, and inference latency are the real ranking signals.

The Token Efficiency-SOV Feedback Loop

Share of Voice in generative engines works backward from what SEO teams expect. In traditional SEO, link equity flows fairly predictably; in answer engines, visibility is also shaped by cost constraints.

Here's the machine logic: A model serving 10% of queries but using 40% fewer tokens per response gets preferential routing. That's not a ranking bug; that's infrastructure optimization. When Perplexity chooses between Claude 3.5 Sonnet and an open-source model for your query, latency and token burn are in the equation alongside accuracy.
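To make that trade-off concrete, here is a minimal sketch of how a router might weigh accuracy against latency and token burn. The weights, reference values, and the two candidate profiles are all hypothetical; no answer engine publishes its actual routing formula.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    accuracy: float             # fraction of answers judged correct, 0..1
    latency_ms: float           # typical end-to-end latency
    tokens_per_response: float  # average output tokens per answer


def routing_score(c, w_accuracy=1.0, w_latency=0.3, w_tokens=0.3,
                  latency_ref_ms=1000.0, tokens_ref=500.0):
    """Higher is better. Latency and token use act as penalties,
    each normalized by an assumed reference value."""
    return (w_accuracy * c.accuracy
            - w_latency * (c.latency_ms / latency_ref_ms)
            - w_tokens * (c.tokens_per_response / tokens_ref))


# Hypothetical profiles: "lean" is slightly less accurate but uses 40% fewer tokens.
baseline = Candidate("baseline", accuracy=0.90, latency_ms=800, tokens_per_response=500)
lean = Candidate("lean", accuracy=0.88, latency_ms=600, tokens_per_response=300)

preferred = max([baseline, lean], key=routing_score)
print(preferred.name, round(routing_score(preferred), 2))
```

Under these assumed weights, the lean model scores higher despite its small accuracy deficit. Raise `w_accuracy` enough and the preference flips, which is the point: the outcome depends on how the operator prices each factor.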

The GEO move: Monitor your competitors' token efficiency patterns. If you see a competitor's tokens-per-query dropping, they're either:

Optimizing their system prompts (low-cost improvement)

Deploying quantized or distilled models (structural advantage)

Getting ranked down to cheaper inference tiers

LLM Search Console tracks which models appear for high-value queries. Cross-reference that with public reports on token efficiency, and you'll spot SOV shifts before they hit your traffic.
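One rough way to spot a tokens-per-query drop is to fit a trend line to periodic observations. The sketch below assumes you have already collected a weekly tokens-per-query series for a competitor from whatever public reports you trust; the sample numbers and the 2% threshold are illustrative only.

```python
def trend_slope(values):
    """Least-squares slope of values against their index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def is_dropping(values, threshold=-0.02):
    """True if the average change per period, relative to the mean,
    is at or below the (assumed) threshold."""
    relative = trend_slope(values) / (sum(values) / len(values))
    return relative <= threshold


# Hypothetical weekly tokens-per-query observations for one competitor.
weekly_tokens = [520, 505, 490, 470, 455]
print(trend_slope(weekly_tokens), is_dropping(weekly_tokens))
```

A flagged series tells you that something changed, not which of the three explanations above applies; that still needs the cross-referencing step.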

Grounding as a Hallucination-Immunity Buffer

Token efficiency alone is worthless if your model hallucinates. But here's what's rarely discussed: grounding techniques reduce hallucination rate, and although retrieved context adds input tokens, the accuracy gain usually outweighs that overhead. For GEO, that is close to pure upside.

RAG (Retrieval-Augmented Generation) systems, vector grounding, and knowledge graph injection all lower hallucination rates. Answer engines explicitly track this metric. A model that hallucinates 3% of the time gets buried below one that hallucinates 0.5%—even if the latter uses more tokens, because infrastructure operators have SLA penalties for false answers.
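The arithmetic behind that claim can be sketched as an expected cost per answer: token spend plus the hallucination rate times the penalty for a false answer. The per-token price and penalty below are made-up figures chosen only to illustrate the shape of the calculation.

```python
def expected_cost_per_answer(tokens, hallucination_rate,
                             price_per_token=0.00001,
                             penalty_per_false_answer=0.50):
    """Token spend plus expected penalty. Both prices are assumptions."""
    return tokens * price_per_token + hallucination_rate * penalty_per_false_answer


# Ungrounded model: fewer tokens, 3% hallucination rate.
ungrounded = expected_cost_per_answer(tokens=400, hallucination_rate=0.03)

# Grounded model: 50% more tokens, 0.5% hallucination rate.
grounded = expected_cost_per_answer(tokens=600, hallucination_rate=0.005)

print(f"ungrounded: {ungrounded:.4f}  grounded: {grounded:.4f}")
```

With these assumed figures the grounded model is cheaper overall even though it burns more tokens, because the penalty term dominates. If the penalty were close to zero, the ordering would reverse.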

Competitors who have deployed grounding infrastructure are hard to spot. Their SOV looks normal, but their underlying model gets preferred routing because it passes answer-engine audits. You can detect this by monitoring whether a competitor's model appears across diverse query categories: hallucination-prone models tend to be confined to the categories where they are safe.

LLM Search Console reveals which models dominate across query types, not just high-volume ones. That's your signal for competitor grounding deployments.

Inference Gap: The Latency-Accuracy Frontier

There's a frontier in LLM competition nobody discusses: the inference gap. This is the space between maximum accuracy (test-time compute, thinking modes, multi-agent orchestration) and practical latency (sub-second SLA).

Answer engines live on the wrong side of this gap. They can't afford 30-second inference times. They need 200ms responses. Models optimized for inference speed at the cost of reasoning capability are ranked higher in production answer engines than models with better benchmarks.

This is where inference traffic becomes a GEO signal. Models handling high-volume, latency-critical queries (general knowledge, summaries) are ranked higher than models handling complex reasoning (even if the latter are technically better).

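Watch for competitors deploying MoE (Mixture of Experts) models or model routers. An MoE model activates only a subset of its parameters for each token, which cuts compute per response. A router placed in front of several models goes further: it sends simple queries to a lightweight model and complex queries to a heavier one. Either approach is a SOV multiplier. They hit the latency SLA on the bulk of traffic (say 80%), leaving capacity for reasoning on the other 20%.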
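The split between a fast path for simple queries and a heavier path for complex ones can be sketched as a query-level router. This is not how MoE gating works inside a model (that happens per token), and the keyword heuristic here is a deliberately crude placeholder; a production router would more likely use a trained classifier.

```python
# Hypothetical cue words suggesting a query needs multi-step reasoning.
REASONING_HINTS = ("why", "compare", "prove", "analyze", "trade-off")


def pick_tier(query, max_fast_words=12):
    """Return 'fast' or 'reasoning' using a placeholder heuristic."""
    text = query.lower()
    too_long = len(text.split()) > max_fast_words
    has_hint = any(hint in text for hint in REASONING_HINTS)
    return "reasoning" if (too_long or has_hint) else "fast"


def tier_shares(queries):
    """Fraction of queries routed to each tier."""
    if not queries:
        raise ValueError("no queries to route")
    counts = {"fast": 0, "reasoning": 0}
    for q in queries:
        counts[pick_tier(q)] += 1
    return {tier: n / len(queries) for tier, n in counts.items()}


sample = [
    "capital of France",
    "summarize this article",
    "what is a token",
    "weather in Berlin today",
    "compare RAG and fine-tuning for legal search",
]
print(tier_shares(sample))
```

On this toy sample, four of five queries take the fast path, mirroring the 80/20 split described above.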

Competitive Intelligence: The LLM Brand Monitoring Angle

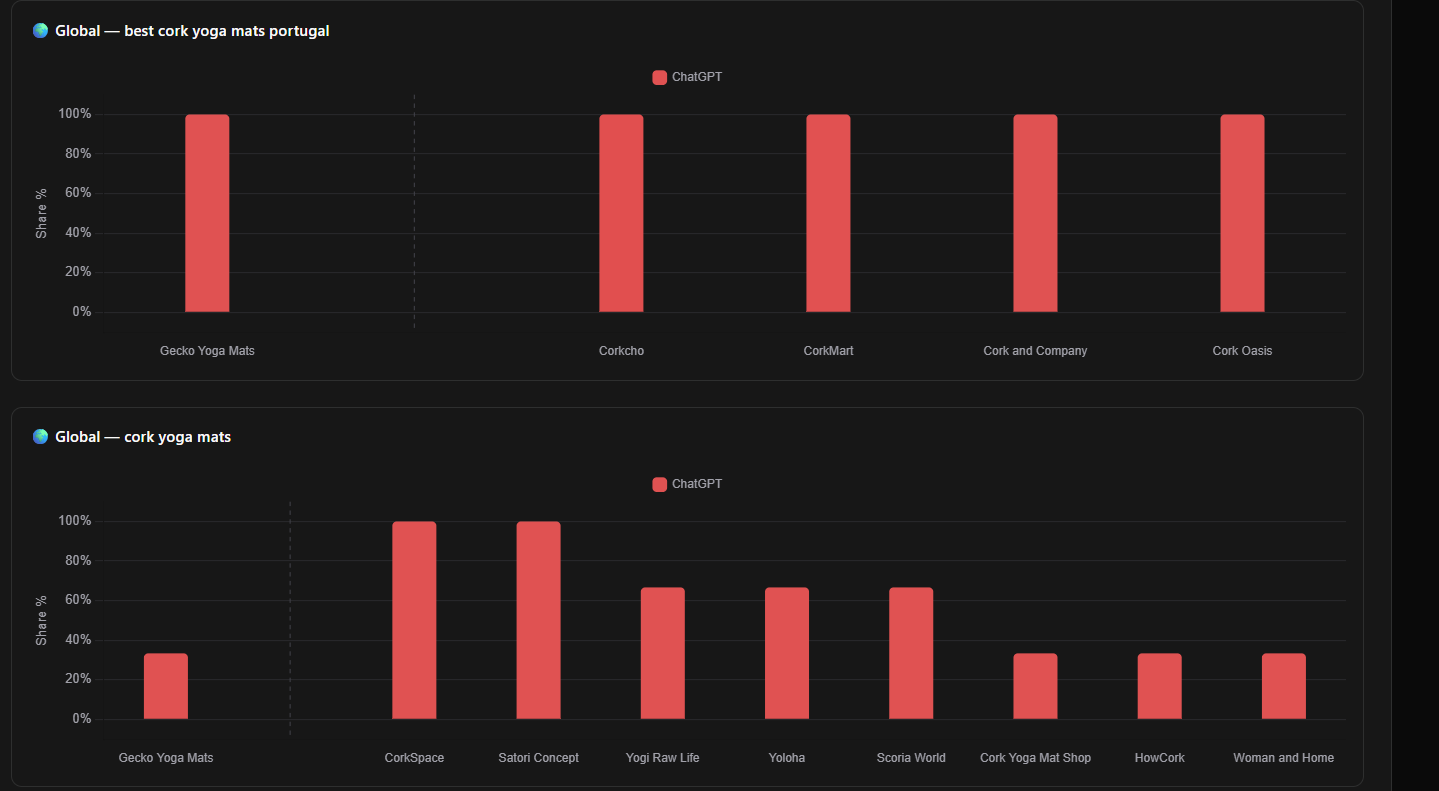

This is where LLM Search Console becomes infrastructure.

You need to track:

Which models appear for which query intent? (Token efficiency is high for intent-specific routing)

Temporal patterns: Do competitors' models appear more often at certain times? (Hints at infrastructure scaling or A/B testing)

Query category saturation: Is a competitor's model dominant in search, but rare in analysis? (Hallucination avoidance, not ranking)

Answer engine rotation: Which models rotate in/out of preference? (Hints at new inference optimization or deployment)
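As a rough illustration of the first and third items, the sketch below aggregates model appearances by query intent. It assumes you can export observations as simple (intent, model) pairs; that format and the model names are hypothetical, not a documented LLM Search Console export.

```python
from collections import Counter, defaultdict


def share_by_intent(observations):
    """Map each intent to {model: share of appearances for that intent}."""
    counts = defaultdict(Counter)
    for intent, model in observations:
        counts[intent][model] += 1
    return {
        intent: {model: n / sum(ctr.values()) for model, n in ctr.items()}
        for intent, ctr in counts.items()
    }


# Hypothetical observations: (query intent, model that appeared).
observations = [
    ("search", "model_a"),
    ("search", "model_a"),
    ("search", "model_a"),
    ("search", "model_b"),
    ("analysis", "model_b"),
    ("analysis", "model_b"),
]

shares = share_by_intent(observations)
print(shares)
```

Here `model_a` dominates search but never appears in analysis, the saturation pattern the list above tells you to look for. Adding an hour-of-day field to each observation would extend the same grouping to temporal patterns.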

The brands that win GEO are the ones doing inference-level competitive research, not keyword-level analysis.

Quick Wins for GEO

Audit your tokens-per-response ratio against competitors using LLM Search Console's traffic insights. If your responses burn 15% more tokens than a competitor's for the same query type, that gap is your SOV ceiling.

Deploy grounding immediately, even simple RAG. It reduces hallucination rate, a metric that answer engines weight heavily. LLM Search Console will show you the traffic lift within 2 weeks.

Profile competitor inference patterns. Track which competitors' models appear during peak vs. off-peak hours. Morning queries might favor latency-optimized models; evening might favor reasoning models.

Monitor the inference gap. If a competitor ships a reasoning model, test whether it appears in answer engines (it won't—SLA too tight). That tells you they're market-segmenting, not broadly competing.

Build for intent-specific inference. Don't optimize one model for everything. Optimize for the answer-engine routing logic: fast models for commodity queries, reasoning for analysis.

Share of Voice in answer engines isn't determined by feature parity. It's determined by the infrastructure math underneath—token efficiency, hallucination rates, and inference latency. LLM Search Console gives you visibility into that black box. Use it.

Your competitors are already monitoring this. Don't be the brand that discovers SOV losses in quarterly reports.