Test-Time Compute Is Rewriting Who Gets Cited in LLM Responses — And Most Brands Are Failing the Reasoning Budget Test

Three hidden connections between reasoning depth, hallucination concentration, and RLHF flywheel effects — and what your brand needs to do before the next model release

When OpenAI shipped o1, most marketing teams read the headline ("better reasoning") and moved on. They missed the underlying mechanic: extended inference in which the model generates, checks, and revises its own intermediate reasoning before it answers. That mechanic has a direct, measurable effect on brand visibility in LLM responses. Almost nobody is tracking it.

Test-Time Compute (TTC) is the practice of allocating additional computational resources during inference rather than during training. Instead of generating a response in a single left-to-right pass, the model runs multiple internal reasoning cycles: checking its own outputs, exploring alternative chains of thought, and testing candidate claims against what it learned in training before committing to an answer.

The result: o1, o3, Gemini 2.0 Flash Thinking, and Claude Extended Thinking mode all behave fundamentally differently from standard models when your brand comes up. Understanding how they differ is now a core competency in generative engine optimization (GEO).

What Test-Time Compute Actually Does Inside the Model

In standard (non-reasoning) LLMs, a prompt triggers a single autoregressive generation pass. The model samples token-by-token from a probability distribution shaped entirely by training. Brand mentions are a function of training data frequency and recency.

TTC models break this pattern. Before returning output to the user, they work through an internal chain-of-thought scratchpad, a reasoning buffer kept separate from the final answer. In it, the model decomposes the query into sub-problems, generates candidate answers and stress-tests them against known facts, flags low-confidence claims for revision or omission, and synthesizes a final verified response.

Depending on the provider, this scratchpad is either hidden from API outputs or returned only in summarized or opt-in form, but its effects show up in the final answers: TTC models produce dramatically fewer hallucinations on well-documented topics, and dramatically different hallucinations on poorly documented ones.

The implication for brand visibility: your content is now being stress-tested by an internal evaluator, not just pattern-matched. Brands that pass the internal verification cycles get cited. Brands that fail get quietly dropped or — worse — confabulated.

Hidden Connection #1: TTC Concentrates Hallucinations on Thin-Content Brands

Here's the counterintuitive finding that most GEO practitioners miss: Test-Time Compute reduces global hallucination rates but concentrates residual errors on under-documented entities.

The mechanism is straightforward once you see it. During reasoning cycles, the model attempts to verify its own claims by cross-referencing internal representations from training. For well-documented brands — those with Wikipedia articles, structured schema data, multiple third-party citations, press coverage — verification succeeds. The claim survives to the output.

For thin-content brands (those with only a homepage, some press releases, and LinkedIn posts), verification fails. The model has insufficient corroborating signal to confirm the claim. It faces a three-way choice:

1. Omit the brand mention entirely (silent erasure).
2. Fabricate plausible-sounding attributes (hallucination with confidence).
3. Hedge with uncertainty language that undermines brand authority ("I believe they may offer...").

Outcome 1 is the most common — and the hardest to detect. Your brand doesn't appear wrong; it simply doesn't appear. Zero-click attribution models miss it entirely because there's nothing to attribute.

The fix requires a structural shift: moving from promotional content to fact-dense entity content. Specific numbers. Named personnel with verifiable titles. Dated product milestones. Third-party citations that corroborate your own claims. The reasoning model's internal evaluator weighs each of these signals separately, and your own page cannot corroborate itself.
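To make the self-corroboration rule concrete, here is a minimal Python sketch that audits brand claims and reports which ones lack enough independent sources. Everything in it is an illustrative assumption: the hypothetical `Source` record and its `kind` labels, the rule that press releases never count, counting distinct hostnames rather than URLs, and the default of two independent sources. It models an editorial checklist, not how any LLM actually scores evidence.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class Source:
    """One place a claim is published (hypothetical audit record)."""
    url: str
    kind: str  # hypothetical labels: "owned_page", "article", "database", "press_release"


def is_independent(source: Source, own_domains: set[str]) -> bool:
    """True if the source was not published by the brand itself."""
    host = (urlparse(source.url).hostname or "").lower()
    owned = any(host == d or host.endswith("." + d) for d in own_domains)
    # Press releases are self-citation even when syndicated on third-party hosts.
    return not owned and source.kind != "press_release"


def corroboration_gaps(claims: dict[str, list[Source]], own_domains: set[str],
                       min_independent: int = 2) -> dict[str, int]:
    """Map each under-corroborated claim to its count of independent hostnames."""
    gaps = {}
    for claim, sources in claims.items():
        # Count distinct hostnames so many pages on one site count once.
        hosts = {urlparse(s.url).hostname for s in sources
                 if is_independent(s, own_domains)}
        if len(hosts) < min_independent:
            gaps[claim] = len(hosts)
    return gaps


if __name__ == "__main__":
    # Hypothetical brand on example.com with two claims to audit.
    claims = {
        "Founded in 2015": [
            Source("https://example.com/about", "owned_page"),
            Source("https://news.example.org/profile", "article"),
            Source("https://registry.example.net/example-corp", "database"),
        ],
        "Serves 500 enterprise customers": [
            Source("https://blog.example.com/500-customers", "owned_page"),
            Source("https://wire.example.net/example-corp-500", "press_release"),
        ],
    }
    print(corroboration_gaps(claims, {"example.com"}))
```

Running it prints `{'Serves 500 enterprise customers': 0}`: the blog subdomain counts as owned, and the syndicated press release is excluded, so that claim has no independent support at all.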

Hidden Connection #2: The Reasoning Budget Cliff and Your Brand's Disappearing Act

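TTC is expensive. A single o3 reasoning query can consume 10–50× the compute of a standard GPT-4o call. Providers manage this through reasoning budgets, some implicit and some exposed as explicit settings such as OpenAI's reasoning_effort parameter and Anthropic's budget_tokens for extended thinking, which limit how much the model can spend on its internal scratchpad before it must commit to an answer.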
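Where a provider exposes the budget explicitly, it is a per-request setting. The minimal Python sketch below only builds request bodies (nothing is sent) for two such controls: `reasoning_effort` for OpenAI o-series models in the Chat Completions API, and the `thinking` object with `budget_tokens` in Anthropic's Messages API. The model IDs, default budgets, and probe prompt are example values and may be out of date; confirm field names and limits against each provider's current API reference.

```python
import json

# Probe prompt from the audit step in this article; "[brand]" is a placeholder.
PROMPT = "What are 10 specific, verifiable facts about [brand]?"


def openai_reasoning_request(prompt: str, effort: str = "high") -> dict:
    """Chat Completions body for an o-series model with a coarse reasoning budget."""
    # o3-mini accepts "low", "medium", or "high" for reasoning_effort.
    if effort not in {"low", "medium", "high"}:
        raise ValueError("effort must be 'low', 'medium', or 'high'")
    return {
        "model": "o3-mini",  # example model ID
        "reasoning_effort": effort,
        "messages": [{"role": "user", "content": prompt}],
    }


def anthropic_thinking_request(prompt: str, budget_tokens: int = 4096,
                               max_tokens: int = 8192) -> dict:
    """Messages API body with an explicit extended-thinking token budget."""
    # Anthropic documents a 1,024-token minimum, and the budget must be below max_tokens.
    if budget_tokens < 1024 or budget_tokens >= max_tokens:
        raise ValueError("need 1024 <= budget_tokens < max_tokens")
    return {
        "model": "claude-3-7-sonnet-20250219",  # example model ID
        "max_tokens": max_tokens,
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }


if __name__ == "__main__":
    for body in (openai_reasoning_request(PROMPT, "low"),
                 anthropic_thinking_request(PROMPT, budget_tokens=2048)):
        print(json.dumps(body, indent=2))
```

Sending the same probe at several budgets (low vs. high effort, or a small vs. large thinking budget) is one way to look for the depth-dependent drop-off this article describes.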

This creates a brand visibility dynamic that is almost entirely unexamined: your brand's LLM visibility does not stay constant across inference modes. It degrades non-linearly as reasoning depth increases.

Here's how it plays out in practice:

1. Fast inference (no TTC): brands appear roughly in proportion to their training data frequency, so moderately documented brands can rank here.
2. Shallow reasoning (small TTC budget): the model runs 1–3 verification cycles, and brands with minimal corroboration start dropping out.
3. Deep reasoning (large TTC budget): the model runs 10+ verification cycles and aggressively prunes low-confidence claims; only brands with dense multi-source corroboration survive.
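The non-linear shape of this decline can be illustrated with a deliberately simple toy model. It is an assumption, not a description of how any production model verifies claims: treat each verification cycle as an independent check that keeps a brand's claim with a fixed probability p, so the claim survives n cycles with probability p^n. The pass rates and cycle counts are made up to mirror the three tiers, and the model captures only the pruning step, not the training-frequency baseline that drives fast inference.

```python
# Toy model (hypothetical): each verification cycle independently keeps a
# brand's claim with probability p, so after n cycles it survives with p**n.


def survival_probability(pass_rate: float, cycles: int) -> float:
    """Probability a claim survives `cycles` independent verification passes."""
    if not 0.0 <= pass_rate <= 1.0:
        raise ValueError("pass_rate must be in [0, 1]")
    if cycles < 0:
        raise ValueError("cycles must be non-negative")
    return pass_rate ** cycles


# Made-up per-cycle pass rates standing in for corroboration density.
BRANDS = {
    "densely corroborated": 0.99,
    "moderately documented": 0.90,
    "thin content": 0.80,
}

# Cycle counts loosely matching the fast / shallow / deep tiers.
TIERS = {"fast (no TTC)": 0, "shallow": 3, "deep": 10}

if __name__ == "__main__":
    for tier, cycles in TIERS.items():
        row = ", ".join(
            f"{name}: {survival_probability(p, cycles):.2f}"
            for name, p in BRANDS.items()
        )
        print(f"{tier:>14} -> {row}")
```

With these made-up numbers, a 0.80 pass rate drops to about 0.51 after 3 cycles and about 0.11 after 10, while 0.99 still sits around 0.90 after 10: small per-cycle differences in corroboration compound into large differences at depth.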

At the "reasoning budget cliff" (the point at which the budget is exhausted), the model must commit to an answer and falls back on the associations most strongly encoded in its training weights. Brands not embedded in high-frequency training data disappear precisely when the model is thinking hardest.

This creates a perverse monitoring gap: brands that test their LLM visibility using standard (non-reasoning) API calls see acceptable results, while their actual performance in reasoning-mode queries — the queries that users make for high-consideration decisions — is dramatically worse.

Operationally, this means your GEO monitoring stack must include separate evaluation tracks for reasoning-mode models (o3, extended thinking, deep research modes) versus standard models. They are measuring different phenomena.
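A minimal Python sketch of separate tracks in a monitoring script, assuming you already log probe responses and tag each with the track that produced it. The `ProbeResult` record, the `"standard"`/`"reasoning"` labels, the brand "Acme", and case-insensitive substring matching are all hypothetical simplifications (substring matching misses misspellings and can over-match short brand names). Share of Voice here is the share of a track's responses that mention the brand, and the gap between tracks corresponds to the TTC vulnerability score in the checklist at the end of this piece.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProbeResult:
    """One logged probe response (hypothetical record format)."""
    track: str  # "standard" or "reasoning"
    prompt: str
    response: str


def share_of_voice(results: list[ProbeResult], brand: str, track: str) -> float:
    """Fraction of a track's responses that mention the brand, case-insensitively."""
    on_track = [r for r in results if r.track == track]
    if not on_track:
        raise ValueError(f"no probe results for track {track!r}")
    hits = sum(brand.lower() in r.response.lower() for r in on_track)
    return hits / len(on_track)


def ttc_vulnerability(results: list[ProbeResult], brand: str) -> float:
    """Standard-track SOV minus reasoning-track SOV; positive means reasoning mode hurts."""
    return (share_of_voice(results, brand, "standard")
            - share_of_voice(results, brand, "reasoning"))


if __name__ == "__main__":
    # Hypothetical responses for the hypothetical brand "Acme".
    log = [
        ProbeResult("standard", "best CRM for SMBs?", "Options include Acme and Foo."),
        ProbeResult("standard", "top CRM vendors?", "Acme, Foo, and Bar are common picks."),
        ProbeResult("reasoning", "best CRM for SMBs?", "After weighing features, Foo fits best."),
        ProbeResult("reasoning", "top CRM vendors?", "Foo, Bar, and Acme lead the market."),
    ]
    print(share_of_voice(log, "Acme", "standard"))   # 1.0
    print(share_of_voice(log, "Acme", "reasoning"))  # 0.5
    print(ttc_vulnerability(log, "Acme"))            # 0.5
```

Keeping the track label on every logged result, rather than in separate dashboards, makes it easy to compare the same prompts across inference modes.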

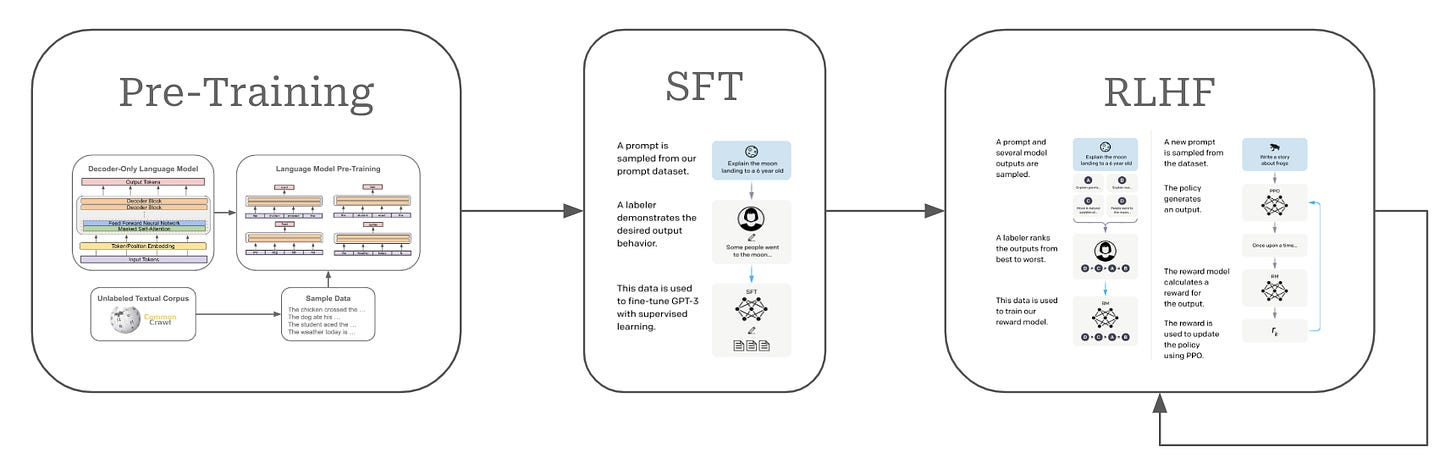

Hidden Connection #3: CoT Traces Become RLHF Data — And Your Brand Is in the Loop

This is the least-discussed feedback mechanism in the TTC brand visibility equation, and it has the largest long-term consequence.

Reasoning models generate chain-of-thought traces before producing final outputs. In leading labs' training pipelines, high-quality CoT traces, especially those that arrive at correct, verifiable answers, can be harvested as training signal for subsequent RLHF and Constitutional AI fine-tuning rounds.

The implication: how your brand appears in CoT traces today directly influences how it appears in model weights six months from now.

If reasoning traces consistently cite your brand correctly, associating it with accurate attributes, correct positioning, and verified claims, that pattern gets reinforced through RLHF. The model learns that citing your brand in this context produces correct outputs and earns a positive reward signal.

If your brand is consistently absent from reasoning traces (because it fails verification cycles, as described above), absence becomes the default learned behavior. Future model versions will default to omitting you not because of training data gaps, but because of reinforced omission patterns.

This creates a compounding flywheel that operates on a 6–12 month training cycle. Brands investing in GEO-structured content now are building positive reinforcement into future model versions. Brands that aren't are accumulating negative reinforcement through omission — and they won't see the damage until the next model release, at which point recovering requires rebuilding the entire corroboration scaffold.

The Share of Voice metric most teams track (% of LLM responses mentioning your brand) is a lagging indicator of this process. By the time SOV decline is observable, RLHF has already encoded the problem into weights.

Quick Wins: What To Do Before the Next Model Release

1. Audit your brand's fact density score. Run your brand through o3 or Claude Extended Thinking with the query: "What are 10 specific, verifiable facts about [brand]?" Compare the output against your actual content. Every fact the model cannot supply, or gets wrong, is a verification failure waiting to happen.

2. Build multi-source corroboration for your top 5 brand claims. Each factual claim you want LLMs to cite needs a minimum of 3 sources, at least 2 of them independent of you: your own structured page, a third-party article, and one structured data source (Wikipedia, Wikidata, or a recognized industry database). Press releases don't count as independent sources; they're treated as self-citation.

3. Instrument separate monitoring tracks for reasoning vs. standard models. Your current LLM monitoring stack is almost certainly measuring fast-inference models only. Add weekly probes using o3-mini (or the cheapest available reasoning tier) to establish a separate reasoning-mode baseline. The delta between standard and reasoning performance is your TTC vulnerability score.

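4. Prioritize schema.org entity markup on all factual content pages. Structured data is the closest approximation to "pre-verified" content from a TTC model's perspective. schema.org/Organization and schema.org/Product are the highest-signal types for brand corroboration during reasoning cycles, especially when their sameAs links point to your independent profiles. Use schema.org/ClaimReview only where it genuinely applies: it marks up a fact-check of someone's claim, so it corroborates your brand only when an independent reviewer publishes it.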

5. Time your content updates to align with known training cutoffs. TTC models running without browsing or retrieval tools operate only on training-time knowledge. Publishing GEO-optimized content in the 6–9 month window before a likely training cutoff maximizes the probability that your improved content enters the next model's training data, and through it the reasoning traces that feed RLHF, before the next weights update.
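For item 4, a minimal sketch of Organization markup generated in Python. The brand name, URLs, and founding date are hypothetical placeholders. `name`, `url`, `sameAs`, and `foundingDate` are standard schema.org Organization properties, and `sameAs` is where the independent profiles from item 2 (a Wikipedia article, a Wikidata item, an industry database listing) can be linked. The code only produces markup; it does not show how any model weighs it.

```python
import json
from typing import Optional


def organization_jsonld(name: str, url: str, same_as: list[str],
                        founding_date: Optional[str] = None) -> str:
    """Serialize a schema.org Organization node as a JSON-LD string."""
    node = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs points at independent profiles describing the same entity.
        "sameAs": same_as,
    }
    if founding_date is not None:
        node["foundingDate"] = founding_date  # ISO 8601 date, e.g. "2015-03-01"
    return json.dumps(node, indent=2)


def as_script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element used to embed it in a page."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical brand and profile URLs; replace with your real entries.
    markup = organization_jsonld(
        name="Example Corp",
        url="https://example.com",
        same_as=[
            "https://en.wikipedia.org/wiki/Example_Corp",
            "https://registry.example.net/example-corp",
        ],
        founding_date="2015-03-01",
    )
    print(as_script_tag(markup))
```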

Test-Time Compute isn't a niche research concept. It's the inference architecture running behind the AI tools your prospects use for high-consideration decisions. The brands that understand this mechanic now will be disproportionately visible in reasoning-mode LLM outputs. The brands that don't will keep optimizing for a surface — standard inference — that is being rapidly deprecated for anything that actually matters.