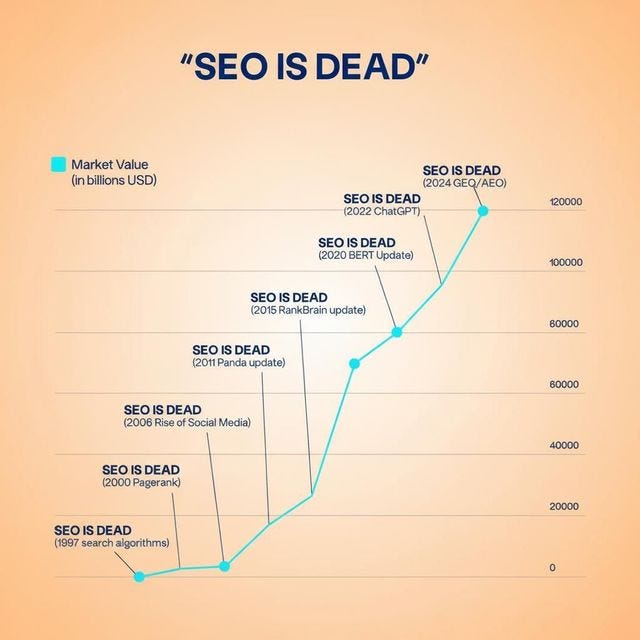

The SERP Is Deprecated. Ship Your GEO Stack Before Your Brand 404s.

Traditional search is bleeding out. Inference Traffic is the only vital sign that matters. Here's your hotfix.

Pull up your analytics. See that 40% drop in organic search traffic? That's not a Google algorithm penalty. That's structural decay — the slow, quiet deprecation of the traditional SERP as your primary distribution channel.

Here's the git blame on what happened: ChatGPT, Gemini, Perplexity, Grok, and Claude are now answering queries directly. No clicks. No visits. No conversions — unless your brand is the answer. The query never leaves the inference layer.

Welcome to Inference Traffic: the mentions, citations, and recommendations that happen inside LLM context windows — invisible to Google Analytics, untracked by Search Console, and completely outside every legacy SEO dashboard you're paying for.

Old SEO vs. LLM SEO: A Schema Diff

Think of traditional SEO as a monolithic SQL query that runs against a single database: Google's index. GEO (Generative Engine Optimization), labeled LLM SEO in the rows below, is a distributed query across multiple inference engines at once. Here's the schema diff:

| | Old SEO | LLM SEO |
|---|---|---|
| Goal | rank on Page 1 | get cited in AI responses |
| Signal | backlinks, keywords | structured data, entity coverage |
| Metric | impressions, CTR | inference mentions, citation rate |
| Crawl target | Googlebot | AI training scrapers + live RAG |
| Failure mode | algorithm update | inference gap (your brand = null) |

That last failure mode is the one that kills companies. An inference gap is when an LLM answers a question in your category — and your brand isn't mentioned. Not because you have bad content. Because you're optimized for a system that's losing market share.

What Is "Inference Traffic" and Why It's Not Optional

Think of it like this: your website's architecture used to matter for indexing. Now it matters for inference. A poorly structured site is like a badly normalized database — the joins fail, the queries time out, and the LLM's RAG pipeline returns your competitor instead of you.

Inference Traffic = every time an AI model mentions, recommends, or cites your brand in response to a user query. This happens:

- In ChatGPT when someone asks "what's the best tool in your category?"
- In Perplexity when a buyer is doing pre-purchase research.
- In Claude during agentic workflows where the AI autonomously selects tools.
- In Gemini when it's augmenting a Google search with AI Overviews.

Each of these is a touchpoint you can't see — unless you're measuring it.

Enter LLM Search Console: Your GEO Source of Truth

LLM Search Console is the instrumentation layer your GEO stack is missing. Think of it as console.log for your brand's AI visibility — but production-grade.

Where legacy tools track keyword rankings, LLM Search Console tracks inference mentions across the major AI engines. It answers the questions that matter right now:

- Is your brand appearing when users query your problem space in ChatGPT?
- Are you getting cited in Perplexity's source panel, or is your competitor eating your lunch?
- What's your share-of-voice in AI-generated answers vs. your category baseline?

This isn't fuzzy sentiment analysis. It's structured, queryable data — the kind you can cross-reference with your content calendar and use to close specific inference gaps before your next product launch.
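To make "structured, queryable data" concrete, here is a minimal Python sketch of the two numbers discussed so far: per-engine share-of-voice and a list of inference gaps. The `Answer` record, its field names, and the sample brands are all hypothetical; this post does not describe LLM Search Console's actual export format, so treat this as an illustration of the arithmetic rather than the product's API.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Answer:
    """One AI-generated answer. All field names are hypothetical."""
    engine: str               # e.g. "chatgpt", "perplexity"
    query: str                # the buyer question that was asked
    brands: tuple[str, ...]   # brands mentioned in the answer


def share_of_voice(answers, brand):
    """Fraction of answers, per engine, that mention `brand`."""
    totals, hits = Counter(), Counter()
    for a in answers:
        totals[a.engine] += 1
        if brand in a.brands:
            hits[a.engine] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}


def inference_gaps(answers, brand):
    """Queries that were answered at least once but never mention `brand`."""
    seen, mentioned = set(), set()
    for a in answers:
        seen.add(a.query)
        if brand in a.brands:
            mentioned.add(a.query)
    return sorted(seen - mentioned)


# Made-up sample data, for illustration only.
SAMPLE = [
    Answer("chatgpt", "best crm for startups", ("Acme", "Globex")),
    Answer("chatgpt", "crm with api access", ("Globex",)),
    Answer("perplexity", "best crm for startups", ("Acme",)),
    Answer("perplexity", "crm with api access", ("Initech",)),
]

print(share_of_voice(SAMPLE, "Acme"))   # {'chatgpt': 0.5, 'perplexity': 0.5}
print(inference_gaps(SAMPLE, "Acme"))   # ['crm with api access']
```

The gap list is the part to cross-reference with your content calendar: each entry is a query that needs a page, an FAQ answer, or a refresh.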

Quick Wins: Your 30-Minute GEO Patch

You don't need to rebuild your entire content stack today. Here's a "hotfix" list you can ship before standup:

1. Create or optimize your llms.txt file

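Add a root-level llms.txt to your site. It is an emerging proposal (not yet a formal standard) for giving LLMs a concise Markdown summary of what your site is about, your entity context, and links to the pages you most want read and cited. Support among AI crawlers is not guaranteed, so treat it as a cheap signal rather than a sure thing. Structure it like a well-commented config file.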
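As a rough sketch, the file can be generated rather than hand-written. The layout below (an H1 title, a blockquote summary, then H2 sections of Markdown links) follows the publicly proposed llms.txt format as I understand it; the function name, company name, and example.com URLs are placeholders, not anything from this post.

```python
def build_llms_txt(name, summary, sections):
    """Render an llms.txt body: H1 title, blockquote summary, H2 link lists.

    `sections` maps a heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content, for illustration only.
LLMS_TXT = build_llms_txt(
    name="Acme Analytics",
    summary="Acme Analytics is a product analytics platform for B2B SaaS teams.",
    sections={
        "Docs": [
            ("Quickstart", "https://example.com/docs/quickstart.md",
             "Install and send a first event"),
        ],
        "Company": [
            ("About", "https://example.com/about.md",
             "Who we are and how to refer to us"),
        ],
    },
)

print(LLMS_TXT)
```

Write the result to `/llms.txt` at your site root as part of your build, so it stays in sync with the pages it links to.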

2. Audit your top 5 category queries in LLM Search Console

Run your most important buyer questions through LLM Search Console. If your brand isn't appearing, you have an inference gap. Document it. Fix it in content.

3. Add entity markup to your homepage and product pages

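LLMs do read your page text, but retrieval pipelines have an easier time with explicit entity relationships than with ones they must infer from prose. Schema.org Organization, Product, and FAQPage markup states who you are, what you sell, and which questions you answer in machine-readable form, which can make your pages easier to extract and attribute in RAG pipelines.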
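One way to produce that markup is to build the JSON-LD in code and embed it in a script tag. The sketch below uses only Python's standard `json` module and real Schema.org types (`Organization`, `FAQPage`, `Question`, `Answer`); the helper names, company details, and URLs are placeholders.

```python
import json


def organization_jsonld(name, url, same_as):
    """Schema.org Organization with links to official profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),
    }


def faq_jsonld(pairs):
    """Schema.org FAQPage built from (question, answer) string pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data):
    """Wrap JSON-LD in a script tag, escaping '</' so the tag cannot close early."""
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{body}</script>'


# Placeholder values, for illustration only.
ORG = organization_jsonld(
    "Acme Analytics",
    "https://example.com",
    ["https://www.linkedin.com/company/example"],
)
FAQ = faq_jsonld([
    ("What does Acme Analytics do?", "It tracks product usage for B2B SaaS teams."),
])

print(script_tag(ORG))
print(script_tag(FAQ))
```

Paste the generated tags into the page head or body, and validate them with a structured-data testing tool before shipping.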

4. Publish a technical FAQ that matches AI query patterns

AI engines love direct, structured answers. A well-formatted FAQ isn't just good UX — it's a context window injection point.

5. Check your citation decay rate weekly

50% of content cited in AI responses is less than 13 weeks old. Set a recurring LLM Search Console check and treat stale citations like you'd treat broken tests in CI.
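This post does not define "citation decay rate" precisely, so the sketch below uses a simple proxy: flag any page whose last update is more than 13 weeks old, the threshold quoted above. The function name, the input shape (a URL-to-date mapping), and the sample pages are assumptions for illustration.

```python
from datetime import date, timedelta

# Threshold taken from the 13-week figure quoted in the text.
STALE_AFTER = timedelta(weeks=13)


def stale_pages(last_updated, today):
    """Return URLs last updated more than 13 weeks before `today`, sorted."""
    cutoff = today - STALE_AFTER
    return sorted(url for url, updated in last_updated.items() if updated < cutoff)


# Made-up sample data, for illustration only.
PAGES = {
    "/pricing": date(2025, 6, 1),
    "/faq": date(2025, 1, 15),
    "/docs": date(2025, 3, 31),   # exactly 13 weeks old on 2025-06-30: not stale
}

print(stale_pages(PAGES, today=date(2025, 6, 30)))   # ['/faq']
```

Run it on a weekly schedule and fail the job when the list is non-empty, the same way a broken test would fail CI.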

The Bottom Line

The SERP isn't going away tomorrow. But its marginal value per query is declining every quarter. If you're still measuring success purely by organic rankings, you're optimizing for a deprecated runtime.

Inference Traffic is where your buyers are now. LLM Search Console is the only way to see it clearly.

Patch your stack. Track your mentions. Close your inference gaps.

Or watch your competitor get cited instead of you.