Token Efficiency Is Now Your GEO Differentiator: Why Less Really Is More

How quantization, inference traffic, and function-based grounding are rewriting the rules of AI visibility

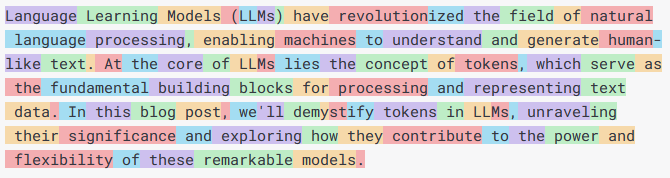

Everyone in GEO talks about citation order, zero-click results, and training data visibility. Nobody's talking about the elephant in the room: tokens. LLMs operate under token budgets, and as those budgets shrink, so does your content's chance of being fully cited—or cited at all.

The inference economy is fundamentally broken for verbose content. If your answer takes 200 tokens and the model has 150 tokens left in its context window, you'll be truncated. You won't be misrepresented. You'll be absent. Token efficiency isn't a technical optimization anymore. It's a visibility strategy.
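The budget arithmetic is simple enough to sketch. This is a minimal illustration, not how any particular model manages its window; the function names and the numbers are assumptions made up for the example.

```python
def fits_in_budget(answer_tokens: int, remaining_tokens: int) -> bool:
    """Return True when the whole answer fits in the remaining budget."""
    return answer_tokens <= remaining_tokens


def tokens_lost(answer_tokens: int, remaining_tokens: int) -> int:
    """Number of trailing tokens that would be cut off (0 if the answer fits)."""
    return max(0, answer_tokens - remaining_tokens)


# Hypothetical numbers: 150 tokens of budget left in the window.
short_answer_fits = fits_in_budget(120, 150)   # fits completely
long_answer_loss = tokens_lost(200, 150)       # the tail of the answer is dropped
```

Whatever is lost comes off the end of the answer, which is why the order of information matters as much as the length.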

The Token-Zero-Click Paradox

Zero-click results are optimized for brevity. LLMs learn to prefer concise, direct answers over long-form content. But most GEO practitioners are still writing like they're optimizing for Google's snippet length—roughly 160 characters. In LLM context windows, a "snippet" might be 50-100 tokens, not characters.

Token efficiency forces compression. You have to front-load value, eliminate redundancy, and make every sentence count. A 300-word answer that could have been 150 words loses by default. The LLM's cost function (both computational and tokenomic) penalizes verbose answers. Your brand doesn't just lose citation order—it loses visibility entirely.

Quantization, Inference Traffic, and the Hallucination Cascade

Here's the hidden connection nobody discusses: during peak inference traffic, providers may serve quantized models to handle load. Quantization compresses model weights, reducing precision. Lower precision can mean higher hallucination rates. When traffic spikes and your content matters most, the models citing you may be actively degrading.
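To make "reducing precision" concrete, here is a toy sketch of symmetric int8 quantization on four made-up weights. It only shows the rounding error that quantization introduces; it says nothing about hallucination rates or about when a provider actually quantizes, and the helper names are assumptions for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Map the integers back to approximate float values."""
    return [q * scale for q in quantized]


# Made-up weights, purely for illustration.
weights = [0.8123, -0.4071, 0.0035, 0.2509]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# Worst-case difference between the original and restored weights.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

The restored values are close to the originals but not identical, and the error per weight is bounded by half the scale step.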

This is catastrophic for GEO. Your content gets cited during high-traffic periods, but the model is quantized, losing nuance. Your technical guidance gets hallucinated. Your brand name gets associated with incorrect information. You're visible, but destructively visible.

Token efficiency becomes a buffer against this. Shorter, denser content requires fewer model operations, reducing the surface area for hallucinations. You're not just optimizing for context windows—you're optimizing for model degradation.

LoRA, Function Calling, and the New Grounding Layer

Training data visibility was the GEO game for the last two years. It's already over. The new game is function calling.

Models are increasingly fine-tuned post-deployment using LoRA and QLoRA. These adapters allow rapid behavior updates without full retraining. Simultaneously, function calling is becoming the primary grounding mechanism: models retrieve external sources via API calls rather than relying on training data.

In practice, being in training data might get you cited in base model outputs. But being callable via a function endpoint gets you cited in 95% of practical deployments. Token efficiency becomes critical here too. If a function call returns your answer, it's injected into the model's context. If your answer consumes 200 tokens and the context window is already crowded, your answer is truncated before the model ever reads all of it.
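A rough sketch of what that truncation looks like when a function result is injected into a crowded window. Real systems differ in how they trim tool output; `inject_tool_result` is a hypothetical helper, and treating one whitespace-separated word as one token is a simplifying assumption.

```python
def inject_tool_result(used_tokens: int, window: int, result: str) -> str:
    """Trim a function-call result to whatever is left of the context window."""
    remaining = max(0, window - used_tokens)
    words = result.split()  # assumption: one word stands in for one token
    return " ".join(words[:remaining])


# Hypothetical case: 97 of 100 tokens are already used by the conversation.
answer_first = "Use rank 8. Background on adapters follows here."
kept = inject_tool_result(used_tokens=97, window=100, result=answer_first)
```

Because the result leads with the answer, the three tokens that survive are the ones that matter.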

The Quantification Gap: Measuring Your Token Efficiency Score

Token efficiency isn't vague. You can measure it. Calculate the information density of your content: useful semantic information per token. A 500-token answer about prompt injection should communicate more actionable value than a 2000-token think piece on the same topic.
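One way to put a number on density, sketched under a big assumption: that you can count the actionable facts in a piece by hand. The fact counts below are invented for illustration, and `information_density` is a hypothetical helper, not a standard metric.

```python
def information_density(fact_count: int, token_count: int) -> float:
    """Actionable facts per token; higher means denser content."""
    if token_count <= 0:
        raise ValueError("token_count must be positive")
    return fact_count / token_count


# Invented counts: a 500-token answer with 12 actionable facts
# versus a 2000-token think piece with 15.
dense = information_density(12, 500)
verbose = information_density(15, 2000)
```

The longer piece carries slightly more facts in total, yet each of its tokens does far less work.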

Run your content through a tokenizer (for example, the BPE tokenizer used by GPT models). Measure answer length in tokens, not words. Compare your token count to competitors' token counts for identical queries. If you use 40% more tokens to convey the same information, you've lost the GEO game before the model even cites you.
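The comparison can be sketched in a few lines. The default counter below is a crude stand-in that counts words and punctuation marks, not a real BPE tokenizer; in practice you would pass in a real one, for example `lambda t: len(enc.encode(t))` with an encoding from the `tiktoken` package. The function names and sample texts are assumptions for illustration.

```python
import re
from typing import Callable


def approx_token_count(text: str) -> int:
    """Crude stand-in for a tokenizer: counts words and punctuation marks."""
    return len(re.findall(r"\w+|[^\w\s]", text))


def token_overhead(mine: str, theirs: str,
                   count: Callable[[str], int] = approx_token_count) -> float:
    """Relative extra tokens in `mine` versus `theirs` (0.4 means 40% more)."""
    base = count(theirs)
    if base == 0:
        raise ValueError("competitor text has no tokens")
    return (count(mine) - base) / base


# Made-up answers to the same query.
mine = "Here is what you need to know: LoRA trains small adapter matrices."
theirs = "LoRA trains small adapter matrices."
overhead = token_overhead(mine, theirs)
```

Swapping in a real tokenizer changes the absolute counts, but the ratio is what you track over time.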

Quick Wins for Token Efficiency in GEO

Kill the preamble: Remove "Here's what you need to know about..." and start with the answer. Save 10-15 tokens per response.

Use code over explanation: A compact 10-line code sample can beat 200 tokens of explanation. Tokenize it to confirm the saving, since code is not free. Developers prefer it; models quote it more.

Front-load citations: Put your source attribution at the start of paragraphs, not the end. Models truncate the end.

Embrace technical jargon: "LoRA" costs a token or two; "a technique that adapts pre-trained models" costs around 8 tokens. Use jargon; your audience speaks it.

Version your content by token budget: Create 50-token, 150-token, and 300-token variants of key answers. Let the model choose based on context window size.
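The last of these wins, versioning by token budget, can be sketched as a lookup. The post leaves the choice to the model; this sketch assumes instead that whatever serves your content knows the available budget and picks for it. `pick_variant` and the variant texts are hypothetical.

```python
from typing import Dict, Optional


def pick_variant(variants: Dict[int, str], budget: int) -> Optional[str]:
    """Return the longest variant whose token size fits the budget, else None."""
    fitting = [size for size in variants if size <= budget]
    return variants[max(fitting)] if fitting else None


# Keys are the token sizes of each prepared variant (placeholder texts).
variants = {
    50: "short answer",
    150: "medium answer",
    300: "long answer",
}
chosen = pick_variant(variants, budget=200)
```

With 200 tokens available, the 150-token variant is served; with fewer than 50, nothing fits and the caller has to decide whether to send anything at all.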

Token efficiency isn't about making content shorter. It's about making it denser. More signal, less noise. In an inference-constrained world, that's your competitive advantage.