Your SEO Stack Has a Memory Leak: Why Inference Traffic Is Breaking Your Attribution Model

40% of your brand's search surface is invisible to your stack. Here's what to do about it.

Here's the uncomfortable truth your analytics dashboard isn't equipped to tell you: roughly 40% of your brand's search surface area no longer goes through Google. It flows through ChatGPT, Gemini, Grok, Claude, and Perplexity, and your current tooling is completely blind to it.

That's not a rounding error. That's an architectural failure at the data layer.

The Legacy Stack Is Running on Deprecated Assumptions

Think of your current SEO infrastructure like a monolithic codebase written in 2015. It works — barely — but it wasn't designed for the load it's carrying today. Every tool in your stack (rank trackers, crawlers, traffic estimators) was architected around one assumption: users type a query into a search bar, Google serves a list of blue links, and traffic flows predictably.

That model is now about as accurate as a SELECT whose WHERE clause silently filters out 40% of the rows. Technically it runs. Practically, it returns an incomplete answer and never tells you what it left out.

Here's what's actually happening: users are querying LLMs directly and getting synthesized answers. Some of those answers cite sources; many leave you out even when they should include you. Either way, the user often gets what they need without clicking through: no session, no revenue attribution. Your analytics never sees it.

This is the inference gap — the chasm between what LLMs know about your brand and what your dashboards can actually measure.

Inference Traffic: The Metric Your Dashboard Doesn't Have a Column For

Inference traffic is what happens when an LLM answers a user's question and your brand name — or your competitor's — appears in that response. It's brand exposure at the moment of highest user intent, happening entirely outside your funnel instrumentation.

The scary part isn't that it's happening. It's that you have no idea what's being said.
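To make "what's being said" concrete, here's a minimal sketch of finding brand mentions in a captured LLM response and pulling out the sentence each one appears in. It assumes you already have the response text (for example, exported from a monitoring tool). The brand names and the `find_mentions` helper are hypothetical, and plain regex matching will miss paraphrases and misspellings.

```python
import re
from dataclasses import dataclass


@dataclass
class Mention:
    brand: str
    sentence: str  # the sentence in which the brand appeared


def find_mentions(response: str, brands: list[str]) -> list[Mention]:
    """Return one Mention per (brand, sentence) pair found in an LLM response.

    Matching is case-insensitive and bounded by word boundaries, so "Acme"
    matches "Acme's" but not "Acmeware".
    """
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", response.strip())
    mentions = []
    for brand in brands:
        pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
        for sentence in sentences:
            if pattern.search(sentence):
                mentions.append(Mention(brand, sentence))
    return mentions


answer = ("For small teams, Acme is a popular choice. "
          "Globex offers more integrations! Many reviewers prefer Acme's pricing.")
for m in find_mentions(answer, ["Acme", "Globex", "Initech"]):
    print(m.brand, "->", m.sentence)
# Acme -> For small teams, Acme is a popular choice.
# Acme -> Many reviewers prefer Acme's pricing.
# Globex -> Globex offers more integrations!
```

Keeping the surrounding sentence, not just a yes/no flag, is what lets you judge whether a mention is a recommendation, a neutral listing, or a warning.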

Is ChatGPT recommending you when users ask about your product category? Is Gemini recommending a competitor instead? When Perplexity synthesizes an answer about your industry, do you even appear in the context window?

If you can't answer those questions with data, you're making product and marketing decisions with a corrupted data store.
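Answering those questions with data mostly comes down to sampling: ask each model the same category prompts and count how often each brand shows up. The sketch below assumes you already have the results as simple (model, prompt, response) records. The record shape, model labels, brands, and sample responses are all hypothetical, and the substring check is deliberately naive.

```python
from collections import defaultdict
from typing import NamedTuple


class Sample(NamedTuple):
    model: str     # illustrative label, e.g. "chatgpt" or "gemini"
    prompt: str    # the category question that was asked
    response: str  # the model's full answer text


def mention_rates(samples: list[Sample], brands: list[str]) -> dict[str, dict[str, float]]:
    """Fraction of each model's responses that mention each brand (case-insensitive substring)."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, dict[str, int]] = defaultdict(lambda: defaultdict(int))
    for s in samples:
        totals[s.model] += 1
        text = s.response.lower()
        for brand in brands:
            if brand.lower() in text:
                hits[s.model][brand] += 1
    return {
        model: {brand: hits[model][brand] / n for brand in brands}
        for model, n in totals.items()
    }


samples = [
    Sample("chatgpt", "best crm for startups", "Acme and Globex are both solid."),
    Sample("chatgpt", "cheapest crm", "Globex has the lowest entry price."),
    Sample("gemini", "best crm for startups", "Most teams pick Globex."),
    Sample("gemini", "cheapest crm", "Globex or Initech, depending on seats."),
]
print(mention_rates(samples, ["Acme", "Globex"]))
# {'chatgpt': {'Acme': 0.5, 'Globex': 1.0}, 'gemini': {'Acme': 0.0, 'Globex': 1.0}}
```

LLM answers vary between runs, so a rate computed over many repetitions of each prompt is more trustworthy than a single response.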

LLM Search Console: Observability for the Inference Layer

This is exactly the problem LLM Search Console was built to solve. Think of it as Google Search Console — but for the AI layer of the web.

Where GSC tells you which queries surface your site in traditional SERPs, LLM Search Console tracks how and when your brand appears in LLM responses across the major AI platforms. It's LLM brand monitoring with the rigor of a proper observability stack.

The core value is simple: you get ground truth on your inference visibility. Which AI models mention you? In what context? What are users asking that triggers (or fails to trigger) your brand? This isn't vanity analytics — it's inference gap analysis that feeds directly into actionable optimization.

For teams doing LLM Competition Research, it's even more powerful. You can see not just where you appear, but where your competitors are getting cited instead of you — and reverse-engineer their knowledge graph footprint.
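A citation gap is simply a prompt where a competitor shows up and you don't. Assuming you can get, per model and prompt, the set of brands mentioned in the response (the data shape here is a hypothetical stand-in, not LLM Search Console's actual export format), finding the gaps is a set check:

```python
def citation_gaps(
    mentions: dict[tuple[str, str], set[str]],
    you: str,
    competitor: str,
) -> list[tuple[str, str]]:
    """Return sorted (model, prompt) pairs where `competitor` was mentioned but `you` were not.

    `mentions` maps (model, prompt) -> set of brand names found in that response.
    """
    return sorted(
        key
        for key, brands in mentions.items()
        if competitor in brands and you not in brands
    )


# Hypothetical observations; brands and prompts are placeholders.
observed = {
    ("chatgpt", "best crm for startups"): {"Acme", "Globex"},
    ("chatgpt", "crm with free tier"): {"Globex"},
    ("perplexity", "crm with free tier"): {"Globex", "Initech"},
    ("perplexity", "best crm for startups"): {"Acme"},
}
for model, prompt in citation_gaps(observed, you="Acme", competitor="Globex"):
    print(f"{model}: '{prompt}'")
# chatgpt: 'crm with free tier'
# perplexity: 'crm with free tier'
```

A prompt that shows up as a gap across several models, like "crm with free tier" here, is usually a better optimization target than a one-off miss.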

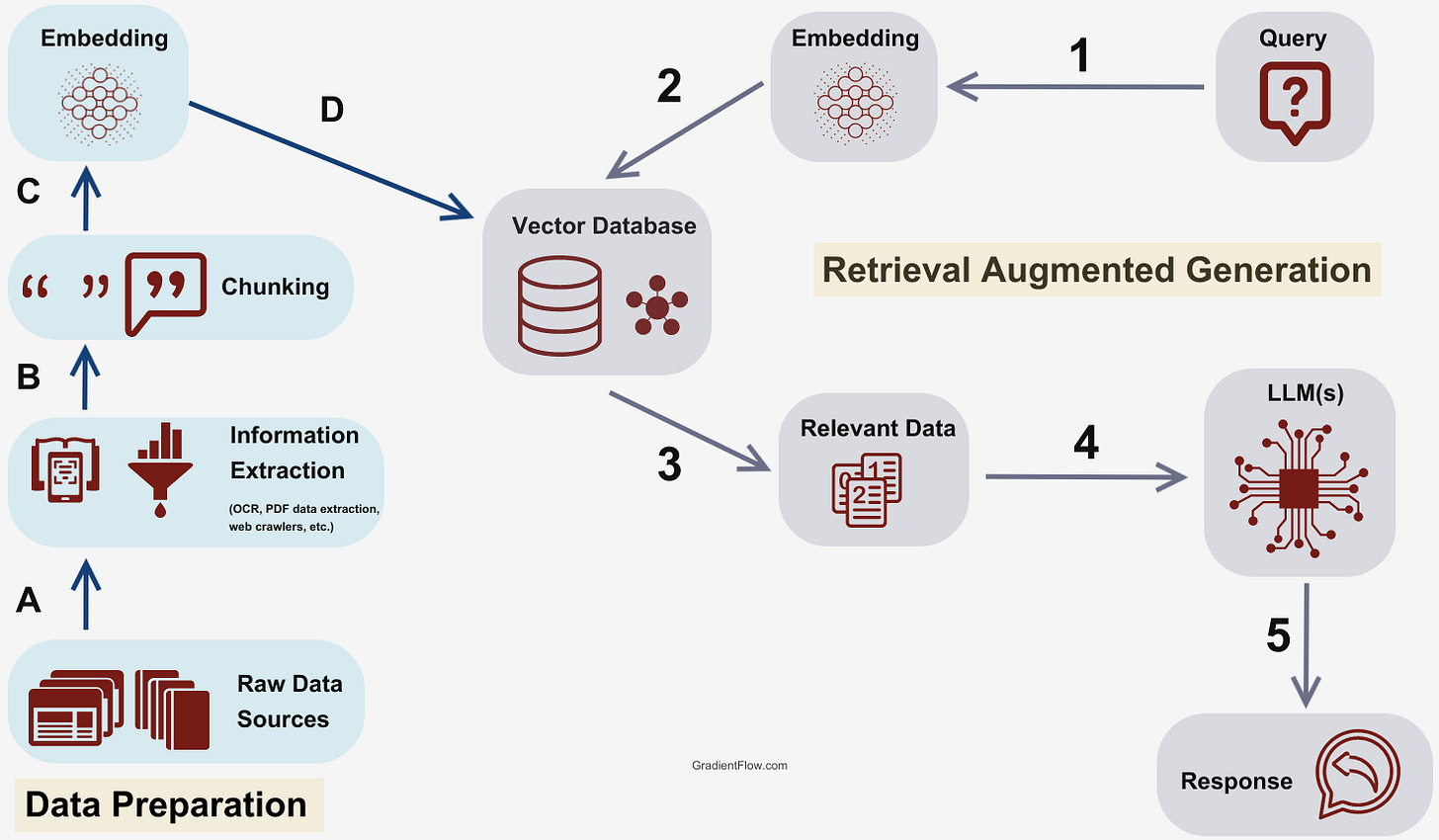

The Architecture Fix: Making Your Brand RAG-Friendly

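Here's where it gets practical. LLMs don't index the web the way search crawlers do. What a model knows comes from its training data plus, in many AI products, a Retrieval-Augmented Generation (RAG) step: at query time the system fetches relevant documents (often through a web search index), splits them into passages, and places the best-matching passages in the model's context window. If your content doesn't break cleanly into self-contained, retrievable passages, you're effectively invisible to the models that matter.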
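To see why structure matters, here's a toy version of the passage-splitting step. It splits a Markdown page on H2/H3 headings so each chunk carries its own heading as context, then ranks chunks by simple word overlap with a query. Real systems typically use embeddings and their own chunking rules, so treat the splitter, the scorer, and the sample page as illustrative assumptions.

```python
import re


def chunk_by_headings(markdown: str) -> list[str]:
    """Split Markdown into chunks, starting a new chunk at each H2 (##) or H3 (###) heading."""
    chunks: list[str] = []
    current: list[str] = []
    for line in markdown.splitlines():
        if re.match(r"#{2,3} ", line) and current:
            chunks.append("\n".join(current).strip())
            current = []
        current.append(line)
    if current:
        chunks.append("\n".join(current).strip())
    return [c for c in chunks if c]


def _words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens in `text`."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def score(query: str, chunk: str) -> int:
    """Count distinct query words that also appear in the chunk (a crude stand-in for embeddings)."""
    return len(_words(query) & _words(chunk))


def top_chunk(query: str, markdown: str) -> str:
    """Return the chunk with the highest overlap score for `query`."""
    return max(chunk_by_headings(markdown), key=lambda c: score(query, c))


page = """# Acme CRM
Acme CRM helps small sales teams track deals.

## Pricing
Acme CRM costs $12 per seat per month, with a free tier for up to 3 users.

## Integrations
Acme CRM connects to Gmail, Slack, and Stripe.
"""
print(top_chunk("how much does acme crm cost per seat", page))
```

Because the pricing facts sit under their own heading, the whole answer lands in a single chunk. Bury the same sentence in a long undifferentiated paragraph and it gets split apart or diluted alongside unrelated text.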

Token efficiency is a real constraint. Dense, unstructured prose is harder for a model to extract signal from than well-structured, semantically rich content. Your /llms.txt file, your structured data, your FAQ schema — these are your insertion points into the AI retrieval layer.
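As one concrete insertion point, here's a small generator for an /llms.txt file. It follows the layout described in the llms.txt proposal (an H1 with the site name, a blockquote summary, then H2 sections containing Markdown link lists with optional notes). The company name, URLs, and section titles are placeholders, and since the convention is still a proposal, it's worth checking the current version before relying on any particular section name.

```python
def build_llms_txt(
    name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render an llms.txt-style Markdown document.

    `sections` maps an H2 title to a list of (link_text, url, note) tuples;
    an empty note omits the ": note" suffix.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for title, links in sections.items():
        lines.append(f"## {title}")
        lines.append("")
        for text, url, note in links:
            entry = f"- [{text}]({url})"
            if note:
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines)


llms_txt = build_llms_txt(
    name="Acme CRM",
    summary="Acme CRM is a customer relationship manager for small sales teams.",
    sections={
        "Product": [
            ("Pricing", "https://example.com/pricing", "Plans and per-seat costs"),
            ("Integrations", "https://example.com/integrations", ""),
        ],
    },
)
print(llms_txt)
```

Generating the file from the same source as your site content makes it easier to keep factual and up to date, which matters more than any particular formatting detail.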

Quick Wins

Set up LLM Search Console → connect your brand, define your core product queries, and get baseline inference visibility within minutes.

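Audit your /llms.txt file → if you don't have one, create one. It's a Markdown file served from the root of your domain that gives LLMs and AI agents a concise summary of what your site is about, with links to your most important pages. It's a proposed convention rather than a formal standard, so support varies by platform. Keep it clean, factual, and structured.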

Run a competitor inference audit → use LLM Search Console's competition research to see which brands dominate AI responses in your category. Identify citation gaps.

Reformat 3 high-intent pages → pick your top 3 commercial pages. Add a TL;DR block at the top, use a clear H2/H3 structure, and add an explicit FAQ section. This makes each section easier to retrieve as a self-contained passage once crawlers and retrieval systems pick up the new versions.

Track weekly, not monthly → inference visibility can shift fast when models update. Weekly monitoring via LLM Search Console keeps you ahead of the drift.
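Weekly tracking only pays off if something flags the drift. Here's a minimal sketch, assuming you record one mention rate per model per week (the history values and the 10-point threshold are hypothetical): compare each model's latest week with the previous one and report drops larger than the threshold.

```python
def flag_drops(
    weekly_rates: dict[str, list[float]],
    threshold: float = 0.10,
) -> dict[str, float]:
    """Return {model: change} for models whose mention rate fell by more than
    `threshold` between the last two recorded weeks. Rates are fractions in [0, 1]."""
    flagged = {}
    for model, rates in weekly_rates.items():
        if len(rates) < 2:
            continue  # need at least two weeks to compare
        change = rates[-1] - rates[-2]
        if change < -threshold:
            flagged[model] = round(change, 4)
    return flagged


history = {
    "chatgpt": [0.42, 0.45, 0.44],
    "gemini": [0.30, 0.31, 0.12],
    "perplexity": [0.25],
}
print(flag_drops(history))
# {'gemini': -0.19}
```

With only a handful of prompts per model, week-to-week swings can be sampling noise, so it's worth collecting enough responses per week before acting on a flag.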

The web didn't break. It just upgraded to a new runtime you weren't tracking. Time to instrument it properly.