Zero-Click Results in LLM Search: The Invisible Impressions Killing Your Brand Intelligence

Three hidden connections between zero-click mentions, RLHF brand bias, and the Share of Voice collapse silently breaking your GEO strategy

Your brand appeared in 38% of AI responses in your category last week. You have no idea. No click fired. No session started. No attribution model registered the event. This is the zero-click problem in LLM search — and it's structurally different from the zero-click SERP features you've been complaining about since 2019.

In traditional search, a zero-click result means Google answered the query on the SERP and the user never visited your site. You could at least see the impression in Search Console. In LLM search, you get no impression data, no referrer, and no signal of any kind. The model just... mentioned you. Or didn't.

There are three hidden mechanics driving this blind spot that almost nobody is talking about.

Hidden Connection #1: RLHF Creates Systematic Zero-Click Brand Winners

Reinforcement Learning from Human Feedback (RLHF) doesn't just make models more helpful. It can also encode brand preferences into model weights at training time. When human raters consistently prefer responses that mention Brand A over Brand B, that preference can get baked into the model's output distribution.

This means certain brands get "free" zero-click exposure across millions of queries — not because their content is better optimized, but because RLHF raters happened to prefer them during a training run that occurred months or years ago. Your GEO content strategy cannot displace this. You can publish 200 structured data articles and still lose to a brand that was favored during RLHF training.

The implication: zero-click Share of Voice in LLM search is partly a function of biases introduced during training, including RLHF rater preferences, not just current content quality. If you're not tracking which models recommend you and which don't, you can't even detect whether you're a beneficiary or a victim of this effect.

Hidden Connection #2: The Citation Gap — Mentioned Without a URL

Here's what makes LLM zero-clicks uniquely poisonous for attribution: LLMs frequently mention brands without citing a source URL. In a traditional zero-click SERP feature, Google pulls content from a specific page — you can verify it in GSC. In LLM responses, a model might recommend your brand based on training data from 18 months ago, with zero citation attached.

This creates a two-tier zero-click problem. Tier one: the user sees an AI response and doesn't click through to your site (zero-click). Tier two: even the model doesn't tell you which of your pages — if any — influenced its answer (zero-attribution).

Your current stack — GA4, GSC, any SEO platform — sees none of this. The only way to surface the citation gap is to systematically query LLMs with your target prompts and log which responses mention you, how they frame the mention, and whether any source URL is cited.
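Here is a minimal sketch of what logging a single response could look like, assuming you already have the raw response text in hand. The names `classify_response` and `MentionRecord`, the brand "Acme", and the domain `acme.example` are all hypothetical, and the URL regex is a rough heuristic rather than a full parser.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlparse

# Rough heuristic for URLs embedded in free text (assumption, not a full URL grammar).
URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")

@dataclass
class MentionRecord:
    mentioned: bool          # brand name appears in the response
    cited_urls: list         # every URL found in the response text
    own_domain_cited: bool   # at least one URL points at the brand's own domain

    @property
    def citation_gap(self) -> bool:
        # Mentioned, but with no link back to the brand's site.
        return self.mentioned and not self.own_domain_cited

def classify_response(text: str, brand: str, domain: str) -> MentionRecord:
    """Classify one LLM response for brand mention and citation."""
    # Word-boundary, case-insensitive match so "Acme" does not match "Acmeology".
    mentioned = re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE) is not None
    urls = [u.rstrip(".,;") for u in URL_PATTERN.findall(text)]
    domain = domain.lower()
    own = False
    for url in urls:
        host = urlparse(url).hostname or ""
        # Accept the bare domain or any subdomain of it.
        if host == domain or host.endswith("." + domain):
            own = True
    return MentionRecord(mentioned, urls, own)
```

A response like "We recommend Acme for small teams." would come back with `citation_gap` set to true: a mention with no URL attached, which is exactly the tier-two case described above. Framing and sentiment are left out of this sketch because they need more than string matching.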

Hidden Connection #3: SOV Metrics Collapse at the LLM Layer

In traditional search, Share of Voice is a ratio: your impressions divided by total impressions for a keyword set. It's imperfect but measurable. In LLM search, that metric collapses entirely.

LLM responses are generative, not retrieved. There's no fixed result set to calculate a denominator from. When ChatGPT answers "what's the best [your category] tool," the answer is generated on the fly, shaped by prompt phrasing, system instructions, conversation context, and model version. Run the same prompt 10 times and you may get 10 different sets of brand mentions.

This means zero-click SOV in LLM search requires repeated, systematic prompt-level measurement — not impression sampling. You need to run the same prompts across multiple models, multiple times, on a schedule, and aggregate mention rates. That's a fundamentally different measurement architecture than anything built for traditional search.
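One way to turn repeated runs into numbers is sketched below. It assumes each run has already been reduced to a `(model, prompt, brands_mentioned)` tuple, and it uses one possible definition of LLM Share of Voice: your mention rate divided by the sum of all brands' mention rates for that model and prompt. Both function names and that definition are assumptions for illustration, not an established standard.

```python
from collections import defaultdict

def mention_rates(records):
    """records: iterable of (model, prompt, brands_mentioned) tuples, one per run.

    Returns {(model, prompt): {brand: fraction of runs that mentioned it}}.
    """
    runs = defaultdict(int)
    hits = defaultdict(lambda: defaultdict(int))
    for model, prompt, brands in records:
        key = (model, prompt)
        runs[key] += 1
        # set() so a brand named twice in one response counts once per run.
        for brand in set(brands):
            hits[key][brand] += 1
    return {key: {b: n / runs[key] for b, n in hits[key].items()} for key in runs}

def share_of_voice(rates, brand):
    """Assumed SOV: brand's mention rate over the sum of all brands' rates."""
    sov = {}
    for key, by_brand in rates.items():
        total = sum(by_brand.values())
        sov[key] = by_brand.get(brand, 0.0) / total if total else 0.0
    return sov

# Four runs of one prompt on one (hypothetical) model.
records = [
    ("model-a", "best crm tool", ["Acme", "Globex"]),
    ("model-a", "best crm tool", ["Acme"]),
    ("model-a", "best crm tool", []),
    ("model-a", "best crm tool", ["Globex", "Initech"]),
]
rates = mention_rates(records)
sov = share_of_voice(rates, "Acme")
```

In this toy data Acme appears in 2 of 4 runs, so its mention rate is 0.5. Globex is also at 0.5 and Initech at 0.25, so Acme's share under this definition is 0.5 / 1.25 = 0.4. The denominator here is built from observed runs, which is why the number only stabilizes with enough repetitions.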

GEO: What to Actually Do

1. Map your zero-click exposure first. Before optimizing, measure. Run your 10 highest-intent buyer queries across ChatGPT, Claude, Gemini, and Perplexity. Log which responses mention your brand and whether any citation URL is included. This is your baseline citation gap report.

2. Prioritize citation-generating content. Content that gets cited by LLMs tends to be specific, structured, and authoritative — case studies with real numbers, comparison pages with clear verdict language, original research with a defensible claim. These are the content types that close the citation gap fastest.

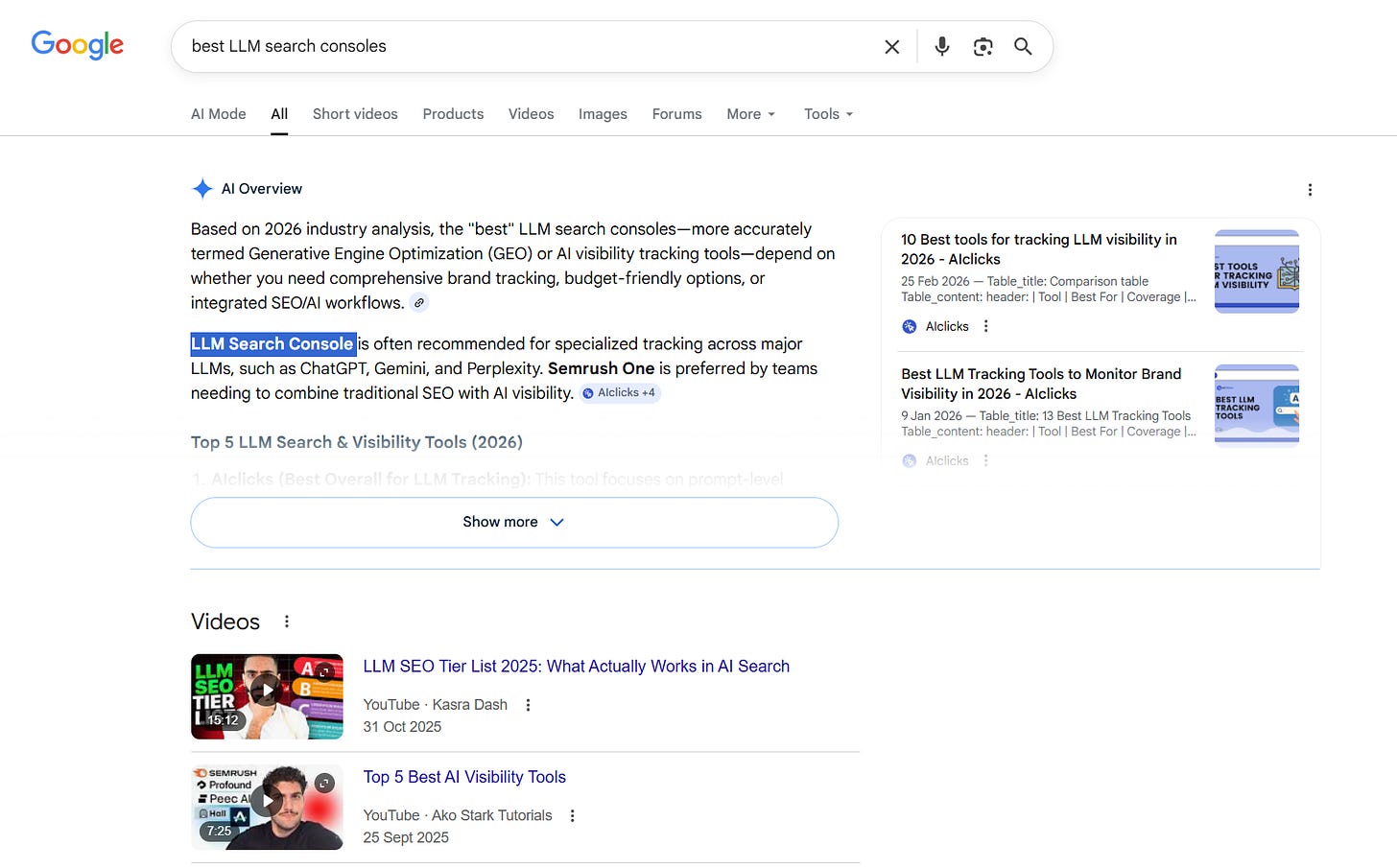

3. Track per-model, per-prompt visibility on a schedule. RLHF-baked preferences mean different models behave differently for the same query. A tool like LLM Search Console runs scheduled scans across ChatGPT, Claude, Gemini, and Perplexity, tracking your brand's mention rate, sentiment, and citations per prompt, per model, over time. That kind of measurement architecture is what lets you detect RLHF bias, see where the citation gap sits, and get a real SOV number in a zero-click world.
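If you want to prototype the scan loop yourself before adopting a tool, a rough sketch follows. It does not reflect how any particular product works. The `ask` callable is a placeholder you would wire to each vendor's API yourself, and `run_scan`, `mention_rate_by_model`, and the model names are hypothetical.

```python
from datetime import datetime, timezone
from typing import Callable, Iterable

def run_scan(prompts: Iterable[str], models: Iterable[str],
             ask: Callable[[str, str], str], brand: str, repeats: int = 5):
    """Query every model with every prompt `repeats` times and log each run.

    `ask(model, prompt)` is an injected placeholder that returns response text.
    """
    prompts, models = list(prompts), list(models)
    rows = []
    for model in models:
        for prompt in prompts:
            for run in range(repeats):
                text = ask(model, prompt)
                rows.append({
                    "ts": datetime.now(timezone.utc).isoformat(),
                    "model": model,
                    "prompt": prompt,
                    "run": run,
                    # Simple substring check; swap in stricter matching as needed.
                    "mentioned": brand.lower() in text.lower(),
                })
    return rows

def mention_rate_by_model(rows):
    """Fraction of logged runs per model in which the brand was mentioned."""
    totals, hits = {}, {}
    for row in rows:
        totals[row["model"]] = totals.get(row["model"], 0) + 1
        hits[row["model"]] = hits.get(row["model"], 0) + int(row["mentioned"])
    return {model: hits[model] / totals[model] for model in totals}

# Stand-in for real API calls, used only to exercise the loop.
def fake_ask(model: str, prompt: str) -> str:
    return "Acme is a solid pick." if model == "model-a" else "Try Globex."

rows = run_scan(["best crm tool"], ["model-a", "model-b"], fake_ask, "Acme", repeats=3)
by_model = mention_rate_by_model(rows)
```

A large, persistent gap between models on the same prompts is the kind of signal that points to model-level preference rather than a content problem. Running the scan from a scheduler and storing the rows with their timestamps gives you the trend line.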

The zero-click era of LLM search isn't coming. It's already your baseline. The brands that figure out measurement first will own the space. Start there.